A Practical AI Governance Framework for Mid-Market

A practical AI governance framework for mid-market organisations. Covers PIPEDA, Bill C-27, and a programme you can stand up in eight weeks.

Adam Nameh

April 14, 2026 · 9 min read

Every mid-market leader deploying AI faces the same friction: your team is already using AI tools, but you have no formal governance around them. The enterprise frameworks published by major consultancies assume you have a Chief AI Officer and a 12-month runway. You have neither.

A mid-market AI governance framework needs to be lightweight enough for one leader to own part-time, structured enough to satisfy regulators and auditors, and practical enough that people actually follow it. The good news is that the framework itself is not complicated. It has four layers, it can be stood up in eight weeks, and it starts producing value the moment you publish an acceptable use policy.

The hard part is not building the framework. It is balancing governance with adoption so that your team uses the approved path instead of routing around it.

Why governance matters more in 2026

AI adoption has accelerated sharply. McKinsey's 2024 "The state of AI" survey found that 72 percent of organisations used AI in at least one business function, up from 55 percent a year earlier. For mid-market teams, the implication is clear: your people are using AI whether you have a governance framework or not.

At the same time, Canada's regulatory landscape is catching up. Bill C-27, the Digital Charter Implementation Act, 2022, proposed AI-specific obligations that did not exist when most mid-market companies started experimenting with AI tools. The bill died on the order paper when Parliament was prorogued in January 2025, but it is still a useful indication of what a future federal AI law could require. The organisations that wait for regulation to force their hand will be scrambling. The ones that build a framework now will be well positioned to comply.

Enterprise governance frameworks do not fit mid-market

Every major consultancy has published an AI governance framework. They are thorough. They are also built for organisations with a dedicated compliance team and a 12-month implementation timeline.

Mid-market companies have neither of those things. You have a VP or Director who already owns three other priorities. You have a small IT team that is already stretched. You might not even have a formal data governance programme yet, let alone one for AI.

The enterprise frameworks are not wrong. They are just not built for you.

When a 500-person company tries to adopt a framework designed for 50,000, one of two things happens: either the framework gets abandoned because it is too heavy, or it gets partially implemented in ways that create a false sense of security.

Meanwhile, your team is already pasting customer data into free tools, building workflows with personal accounts, and making decisions informed by models nobody audited. This is shadow AI, and it is the real risk. Not the absence of a 200-page policy document.

Four layers make a working AI governance programme

A practical AI governance framework for mid-market has four layers. Each one builds on the last.

Layer one: acceptable use policy. This is a single document, ideally one page, that defines what AI can and cannot be used for in your organisation. Can employees use AI to draft customer communications? To analyse financial data? To make recommendations about staffing?

The policy does not need to cover every scenario. It needs to draw clear lines on the obvious cases and establish a process for the grey areas.

Layer two: data classification. Not every piece of data carries the same risk. A simple three-tier system works: public data (marketing materials, public filings), internal data (operational metrics, project details), and restricted data (personal information, financial records, health data).

The classification determines what data can go into which AI systems. Restricted data never goes into a consumer-grade tool. Internal data requires an enterprise account with audit logging.
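
The tier rule above can be written down as a small lookup. What follows is a minimal sketch in Python, not a definitive implementation. The names `DataTier`, `ToolClass`, `ALLOWED`, and `is_allowed` are hypothetical, and the row that limits restricted data to on-premise tools is an assumption for illustration; adjust it to match your own policy.

```python
from enum import Enum


class DataTier(Enum):
    PUBLIC = "public"          # marketing materials, public filings
    INTERNAL = "internal"      # operational metrics, project details
    RESTRICTED = "restricted"  # personal information, financial records, health data


class ToolClass(Enum):
    CONSUMER = "consumer"      # free or personal accounts, no audit logging
    ENTERPRISE = "enterprise"  # enterprise account with SSO and audit logging
    ON_PREMISE = "on_premise"  # self-hosted model (hypothetical third class)


# Which classes of tool each data tier may be sent to.
# The RESTRICTED row is an assumption, not a rule stated in the policy text.
ALLOWED = {
    DataTier.PUBLIC: {ToolClass.CONSUMER, ToolClass.ENTERPRISE, ToolClass.ON_PREMISE},
    DataTier.INTERNAL: {ToolClass.ENTERPRISE, ToolClass.ON_PREMISE},
    DataTier.RESTRICTED: {ToolClass.ON_PREMISE},
}


def is_allowed(tier: DataTier, tool: ToolClass) -> bool:
    """Return True if data of this tier may go into this class of tool."""
    return tool in ALLOWED[tier]
```

Keeping the mapping in one table means the one-page policy and any automated check read from the same source.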

Layer three: tool governance. This means approved tools, enterprise accounts, and audit trails. If your team is going to use Claude, they use an enterprise account, not personal subscriptions. If they are building workflows, they use approved platforms with SSO and logging.

For teams considering on-premise options for restricted data, our on-premise LLM page covers the tradeoffs. This layer is about visibility, not control.

Layer four: review and graduation. How does an AI-built workflow move from experiment to production? Someone builds a draft-generation tool using an approved AI platform. It works well. Now what?

The review process defines what "ready for production" means: accuracy thresholds, human review requirements, data handling compliance, and ongoing monitoring. Our citizen developer enablement playbook covers how to support the people building in layers three and four without killing their momentum.
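
One way to make "ready for production" concrete is a checklist that returns the criteria a workflow still fails. This is a sketch under stated assumptions: `WorkflowReview` and `unmet_criteria` are hypothetical names, and the 0.95 accuracy default is a placeholder, not a recommended threshold.

```python
from dataclasses import dataclass


@dataclass
class WorkflowReview:
    name: str
    measured_accuracy: float       # share of sampled outputs judged correct, 0.0 to 1.0
    human_review_in_place: bool    # a person checks outputs before they are acted on
    data_handling_compliant: bool  # inputs match the data tier approved for the tool
    monitoring_in_place: bool      # someone owns ongoing checks after launch


def unmet_criteria(review: WorkflowReview, min_accuracy: float = 0.95) -> list[str]:
    """List the production-readiness criteria this workflow still fails."""
    gaps = []
    if review.measured_accuracy < min_accuracy:
        gaps.append("accuracy threshold")
    if not review.human_review_in_place:
        gaps.append("human review")
    if not review.data_handling_compliant:
        gaps.append("data handling compliance")
    if not review.monitoring_in_place:
        gaps.append("ongoing monitoring")
    return gaps


# Example: accurate and reviewed, but nobody owns monitoring yet.
review = WorkflowReview("draft-generator", 0.97, True, True, False)
print(unmet_criteria(review))  # ['ongoing monitoring']
```

An empty list means the workflow can graduate; anything else is the to-do list for its builder.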

What do PIPEDA and Bill C-27 require for AI?

Canadian companies operating with AI need to understand two pieces of legislation.

PIPEDA, the Personal Information Protection and Electronic Documents Act, governs how private-sector organisations collect, use, and disclose personal information. It was enacted in 2000 and came into force in stages between 2001 and 2004. Its core principles include consent, purpose limitation, and accountability. If you are putting personal information into an AI system, PIPEDA applies.

Bill C-27 proposed the Artificial Intelligence and Data Act (AIDA), which would add AI-specific requirements. As drafted, the bill would establish transparency obligations for high-impact AI systems: systems that affect decisions about employment, access to services, or other significant outcomes for individuals. The bill died on the order paper when Parliament was prorogued in January 2025, so AIDA as drafted is not in force. Even so, if your AI system influences hiring decisions, credit assessments, or customer eligibility, plan to document how it works and what data it uses.

For mid-market AI governance in Canada, the practical implications are straightforward. Know what data you are feeding into AI systems. If it includes personal information, you need consent and a clear purpose. Keep audit logs so that if a decision was informed by AI, you can explain how.

Maintain a human in the loop for decisions that materially affect individuals. Automated recommendations are fine. Fully automated consequential decisions are where the risk concentrates. Finally, maintain a registry that lists each AI system, what it does, what data it uses, and who owns it.
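
The registry does not need special software. A minimal sketch in Python, assuming hypothetical names (`AISystem`, `needs_attention`) and two illustrative flags that are not taken from any statute: whether the system affects individuals, and whether a human reviews its output.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    name: str
    purpose: str                       # what it does
    data_used: list[str]               # what data it uses
    owner: str                         # who owns it
    affects_individuals: bool = False  # e.g. hiring, credit, eligibility
    human_in_loop: bool = True         # a person reviews before a decision is made


def needs_attention(registry: list[AISystem]) -> list[str]:
    """Names of systems that affect individuals without a human in the loop."""
    return [s.name for s in registry if s.affects_individuals and not s.human_in_loop]


registry = [
    AISystem("draft-generator", "drafts customer emails", ["internal"], "Marketing"),
    AISystem("cv-screener", "ranks job applicants", ["restricted"], "HR",
             affects_individuals=True, human_in_loop=False),
]
print(needs_attention(registry))  # ['cv-screener']
```

A spreadsheet with the same columns works equally well; the point is that every system has an owner and the risky ones are easy to find.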

In our engagements with mid-market teams, we have found that the four-layer framework above, applied with awareness of where personal data flows, satisfies these requirements without requiring a compliance department. Deloitte's "State of AI in the Enterprise" survey reinforces this: organisations that embed governance into their deployment process from the start report fewer compliance incidents than those that retrofit governance after the fact.

You can build the framework in eight weeks

The mistake is trying to build all four layers at once. Sequence them instead.

Weeks one and two: acceptable use policy. Draft it, circulate it, get leadership sign-off. One page. This is the fastest way to move from "no governance" to "some governance," and it immediately reduces your highest risk: people using AI in ways the organisation has not sanctioned.

Weeks three and four: data classification. Audit your data sources. Assign each one a tier. Map which AI tools are approved for which tiers.

This connects directly to the data inventory work in our Data Readiness service. If you have already been through that engagement, you have most of this done.

Weeks five and six: tool governance. Migrate from personal accounts to enterprise accounts. Set up SSO. Enable audit logging. This is an IT project, and it is usually simpler than people expect.

Most major AI platforms support enterprise deployment with standard identity providers. We delivered an AI enablement roadmap for a housing services organisation that included this migration as part of the initial sprint.

Weeks seven and eight: review process. By now, your team has been building under the first three layers. You have real workflows to evaluate. Define what "production-ready" means and apply that standard to whatever has been built. Our Discovery service often produces the initial use case inventory that feeds directly into this review layer.

Eight weeks. One leader owning it part-time. That is the timeline for a working AI governance programme, not a theoretical one.

Governance that kills adoption is worse than no governance

Here is the tension nobody talks about enough. Governance that is too heavy kills adoption. People route around it.

They go back to personal accounts and free tools because the approved path has too much friction. You end up with a governance framework that looks good on paper and governs nothing.

Having no governance is not the answer either. Sensitive data ends up in systems you do not control. Decisions get made based on outputs nobody validated. When something goes wrong, and eventually it will, you have no audit trail.

The balance is design, not policy. Make the governed path easier than the ungoverned path. If the approved AI tool requires seven clicks and a justification form, but a personal account requires zero, you have already lost.

Enterprise accounts should be pre-provisioned. Templates should be ready. Access should be instant.

The organisations that get AI governance right treat it as a product, not a compliance exercise. They measure adoption of governed tools the same way they measure adoption of any internal system. Low adoption is not a people problem. It is a design problem.
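
Measuring adoption can start with a single ratio. The sketch below assumes you can obtain two sets of user identifiers: people active on approved enterprise accounts, and everyone known to use AI at work (for example from a staff survey). Both inputs and the function name `governed_adoption_rate` are hypothetical.

```python
def governed_adoption_rate(governed_users: set[str], all_ai_users: set[str]) -> float:
    """Share of known AI users who are active on the governed path."""
    if not all_ai_users:
        return 0.0
    return len(governed_users & all_ai_users) / len(all_ai_users)


# Example: four people use AI, two of them through approved accounts.
rate = governed_adoption_rate({"ana", "ben"}, {"ana", "ben", "cy", "dee"})
print(rate)  # 0.5
```

A low or falling rate suggests the approved path has too much friction.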

Governance exists to reduce risk. But the biggest risk is not that people use AI irresponsibly. It is that they use it invisibly.

A governance framework that drives AI usage underground is worse than no framework at all. Build one that people actually want to use, and start with a Discovery engagement to understand where AI is already showing up in your organisation.

Frequently Asked Questions

What is an AI governance framework for mid-market companies?

An AI governance framework for mid-market companies is a lightweight programme that defines acceptable AI use, classifies data by risk, governs which tools are approved, and establishes a review process for AI-built workflows. Unlike enterprise frameworks, it is designed to be owned by a single leader and stood up in weeks.

Does PIPEDA apply to AI usage in Canadian companies?

Yes. PIPEDA governs how private-sector organisations collect, use, and disclose personal information. If your AI systems process personal information, such as customer records, employee data, or health information, PIPEDA's consent, purpose limitation, and accountability requirements apply.

What does Bill C-27 mean for mid-market AI deployments?

Bill C-27 proposed the Artificial Intelligence and Data Act (AIDA), which would add transparency obligations for high-impact AI systems, meaning systems that affect employment, credit, or service eligibility decisions. The bill lapsed without passing when Parliament was prorogued in January 2025, but mid-market companies using AI in these areas should still be prepared to document how the system works and what data it uses.

How long does it take to build an AI governance framework?

A practical framework can be stood up in eight weeks when sequenced properly: acceptable use policy in weeks one and two, data classification in weeks three and four, tool governance in weeks five and six, and a review process in weeks seven and eight. One leader owning the process part-time is sufficient.

How do I prevent shadow AI while encouraging AI adoption?

Make the governed path easier than the ungoverned path. Pre-provision enterprise accounts, provide ready-made templates, and ensure access is instant. If using the approved tool requires more effort than pasting into a personal account, people will route around the governance framework.

Adam Nameh

Co-Founder & Data Platform Architect. Adam Nameh is the Co-Founder of Alphabyte Solutions Inc., a Toronto-based data and AI consulting firm that has helped over 100 clients across North America transform complex data environments into actionable business intelligence. With a decade of hands-on experience in data architecture and platform design, Adam works directly with leadership teams to deliver practical AI and data solutions that drive real business outcomes.

View full profile →More from the blog

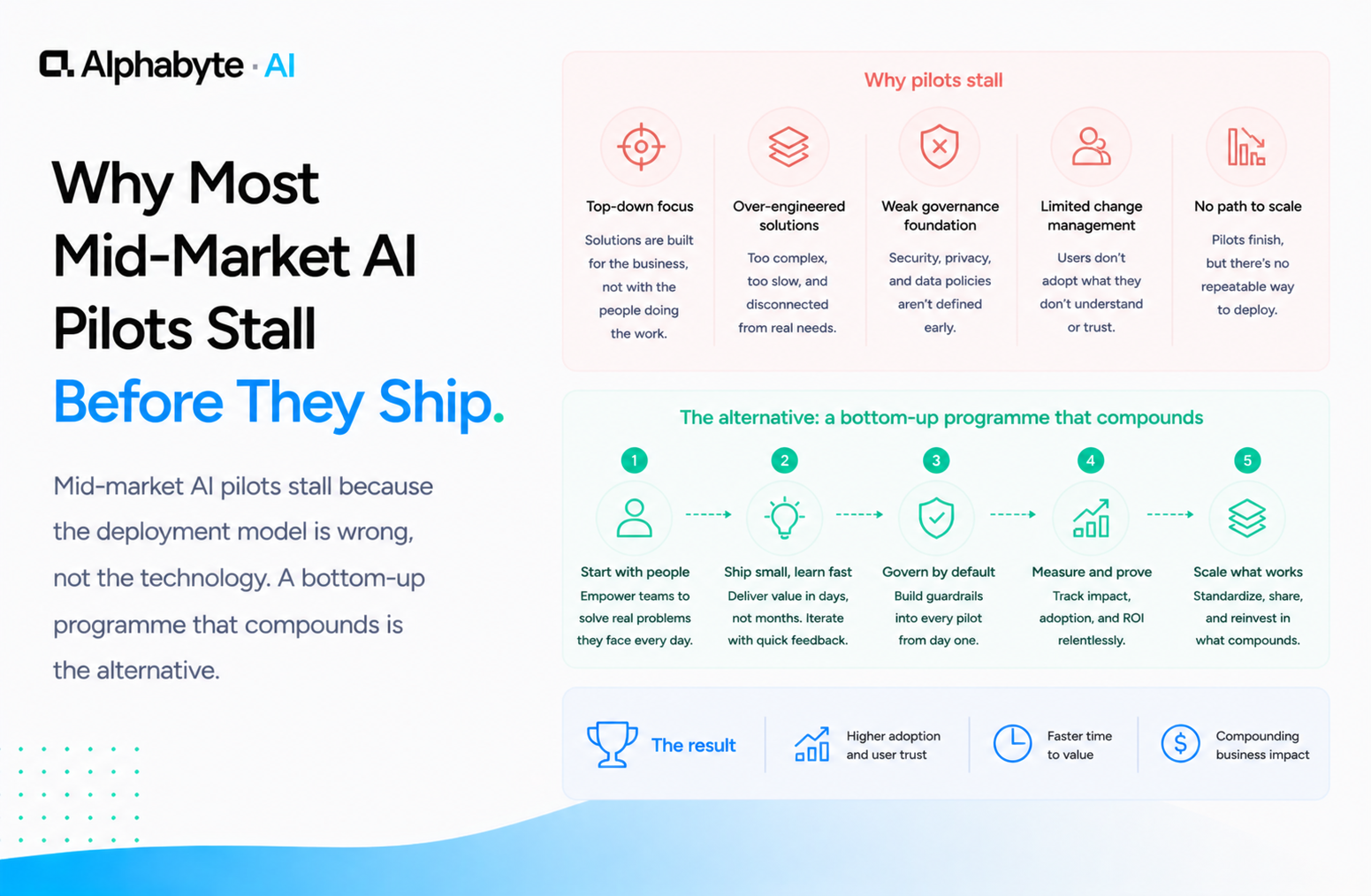

Why Most Mid-Market AI Pilots Stall Before They Ship

Mid-market AI pilots stall because the deployment model is wrong, not the technology. A bottom-up programme that compounds is the alternative.

Read more →

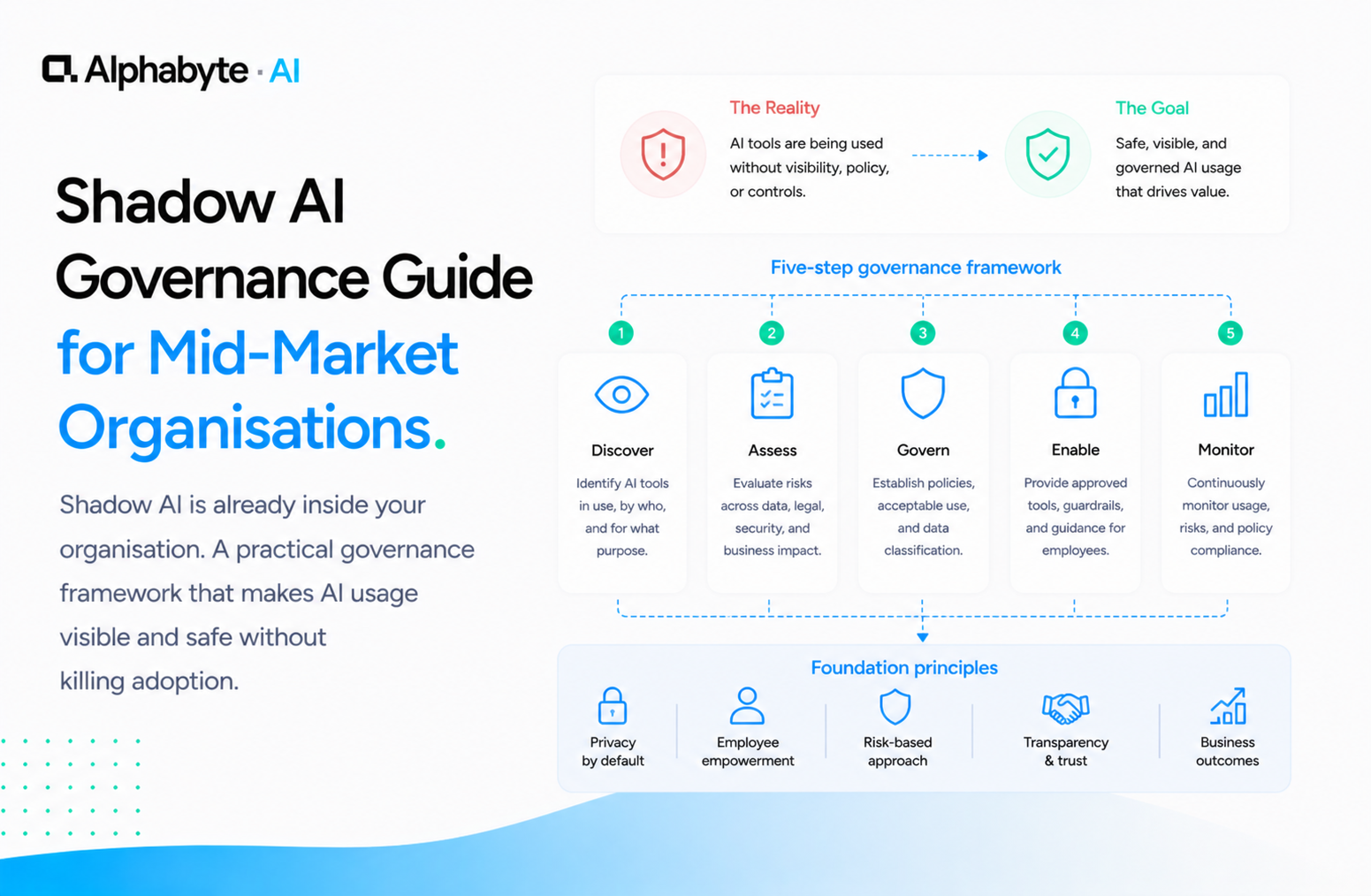

Shadow AI Governance Guide for Mid-Market Organisations

Shadow AI is already inside your organisation. A practical governance framework that makes AI usage visible and safe without killing adoption.

Read more →

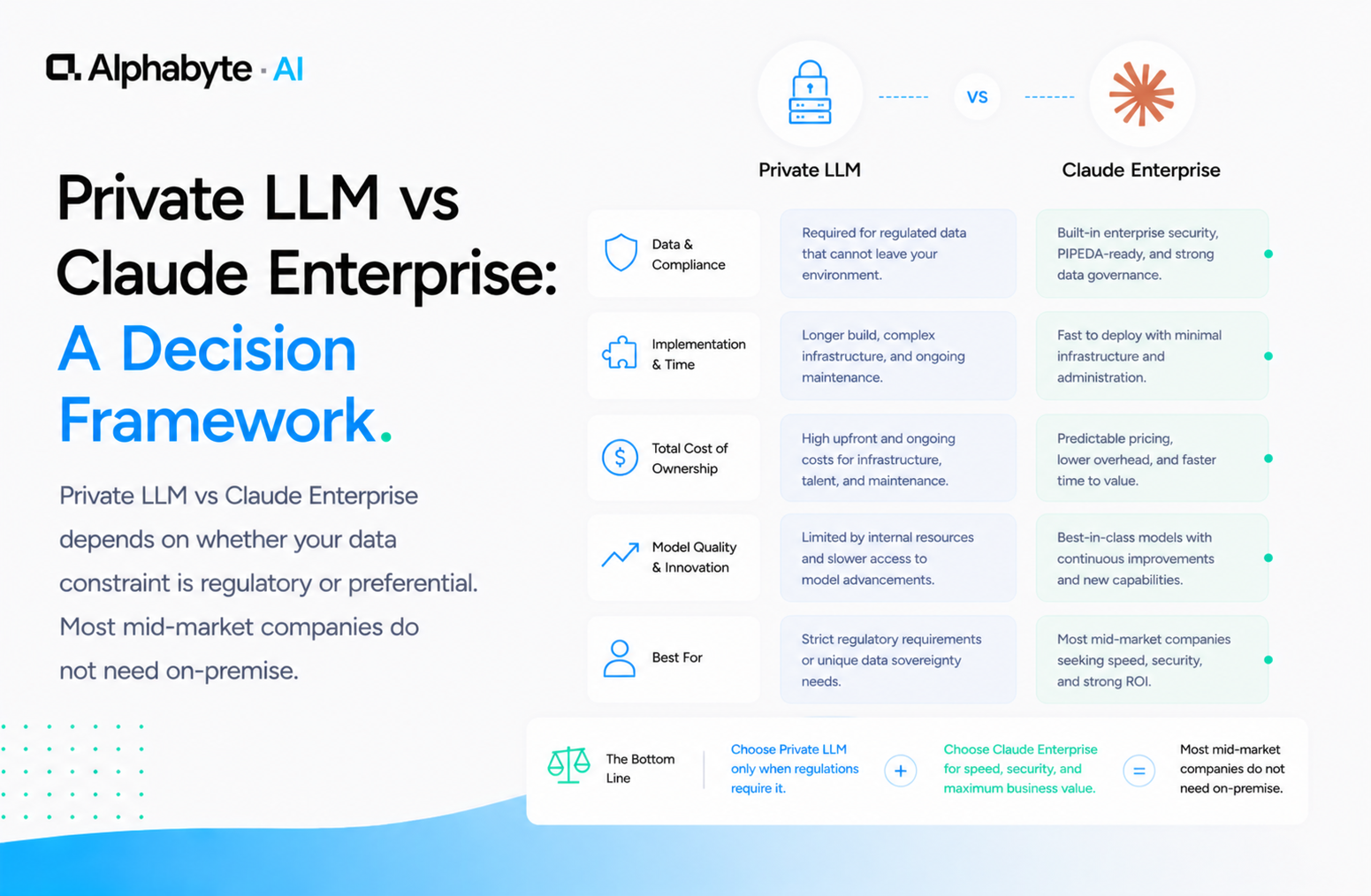

Private LLM vs Claude Enterprise: A Decision Framework

Private LLM vs Claude Enterprise depends on whether your data constraint is regulatory or preferential. Most mid-market companies do not need on-premise.

Read more →