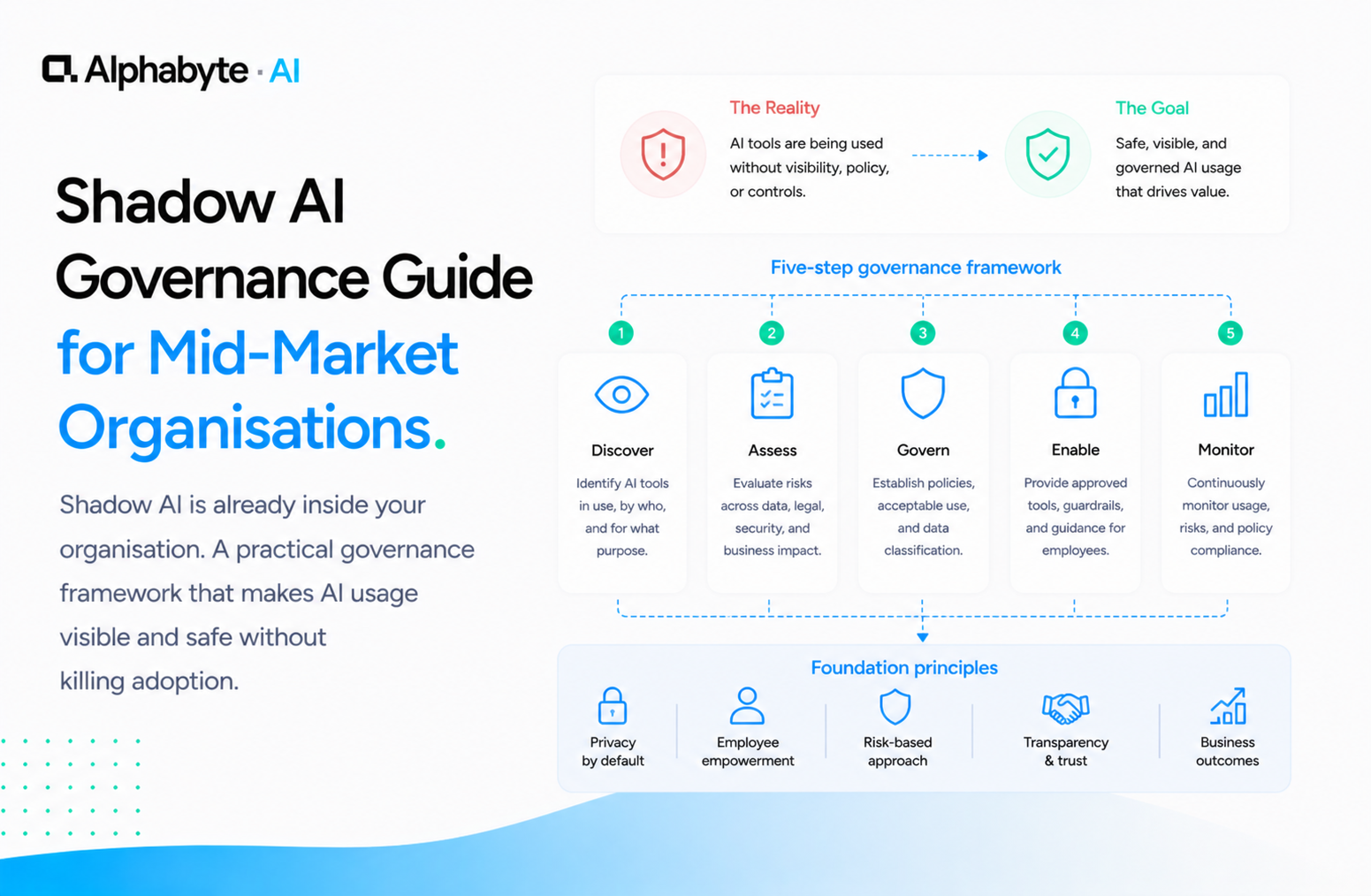

Shadow AI Governance Guide for Mid-Market Organisations

Shadow AI is already inside your organisation. A practical governance framework that makes AI usage visible and safe without killing adoption.

Adam Nameh

May 5, 2026 · 8 min read

Your employees are already using AI on personal accounts your IT team cannot see. The question is not whether shadow AI exists in your organisation, but whether you govern it before it creates a compliance incident.

Shadow AI governance does not require banning personal tools or restricting productivity. It requires making existing usage visible, providing a governed alternative that is at least as capable, and migrating workflows into an environment you control. The goal is to bring existing productivity into a governed environment, not to eliminate it.

Organisations that treat shadow AI as an adoption signal rather than a compliance failure move faster and retain their most productive people. The Discovery service we run with mid-market teams starts with exactly this assessment.

Most mid-market organisations already have a shadow AI problem

The pattern is predictable. An employee discovers that AI saves two or three hours a week on a recurring task. They sign up for a personal account, start using it, and build workflows around it. Sometimes they share tips with colleagues in Slack threads or over lunch. None of this is visible to IT.

According to McKinsey's 2024 "The state of AI" survey, 72 percent of organisations now report AI adoption, up from 55 percent the prior year. But much of that adoption happens outside formal programmes. At mid-market scale, that means dozens or hundreds of employees with ungoverned AI workflows touching real business data every day.

The usage is not occasional or experimental. These are weekly workflows that people depend on, and each one represents data flowing outside your governance perimeter.

The data flowing into consumer AI accounts (customer names, financial figures, internal strategies, proprietary processes) is governed only by whatever terms of service the employee accepted when signing up with a personal credit card. Under Canadian privacy legislation such as PIPEDA, and under reforms of the kind proposed in Bill C-27 (which lapsed when Parliament was prorogued in January 2025), this creates real compliance exposure. The employee may not even realise they are creating a liability. They are solving a problem, and the consumer tool was the fastest path to a solution.

We have seen this pattern at every mid-market organisation we have assessed. When we delivered an AI enablement roadmap for a housing services organisation, the first finding was that multiple teams had already built personal AI workflows for reporting and document review.

The risk was not that employees were using AI. The risk was that nobody could see it, control it, or account for what data was leaving the organisation.

Banning AI tools makes the problem worse

The instinctive response is to issue a policy, block the URLs, and declare the problem solved. It is not solved. Three things happen when organisations ban AI tools.

First, usage goes underground. Employees switch to mobile devices, personal laptops, or tools you have not thought to block. You lose whatever limited visibility you had. The data exposure continues; you just stopped hearing about it.

Second, you lose your best people's best work. The employees using AI are not your underperformers. They are the ones who figured out how to draft a proposal in 20 minutes instead of two hours, who automated weekly reporting, who found a way to analyse data they previously could not touch.

Banning AI penalises initiative and tells your most resourceful people that efficiency is not valued. In our engagements, we have seen top-performing analysts and operations leads quietly revert to manual processes after a ban, losing hours per week they had already reclaimed.

Third, you fall behind. Deloitte's "State of AI in the Enterprise" survey consistently finds that organisations with formal AI governance programmes scale adoption faster than those without. Every month spent on prohibition is a month your competitors spend on adoption. The gap compounds.

The better question is not "how do we stop this" but "how do we make this visible and safe." Our citizen developer enablement playbook addresses the adoption side of this equation in detail. For teams already dealing with ungoverned usage, our shadow AI assessment maps the current landscape before any governance decisions are made.

A four-step governance path that preserves adoption

Governance does not have to mean restriction. Done well, it means your team keeps doing what they are already doing, but on infrastructure you can see and control. The path has four steps.

Acknowledge the usage. Start by understanding what is already happening. Survey your teams. Ask what tools they are using, what tasks they are automating, what data they are working with. This is not an audit; it is discovery. You need to know the landscape before you can govern it. Our Discovery service includes a shadow AI assessment as part of the initial sprint.
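To make the discovery step concrete, here is a minimal sketch of how survey answers could be tallied into a first inventory. The `SurveyResponse` schema, the field names, and the sample answers are all hypothetical; a real survey would use whatever questions fit your teams.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class SurveyResponse:
    """One employee's survey answer (hypothetical schema for illustration)."""
    team: str
    tool: str                      # e.g. "ChatGPT (personal)"
    task: str                      # e.g. "weekly reporting"
    data_types: tuple[str, ...]    # e.g. ("customer names",)


def summarise(responses: list[SurveyResponse]) -> dict[str, Counter]:
    """Count how often each tool, task, and data type is mentioned."""
    summary = {"tools": Counter(), "tasks": Counter(), "data_types": Counter()}
    for r in responses:
        summary["tools"][r.tool] += 1
        summary["tasks"][r.task] += 1
        summary["data_types"].update(r.data_types)
    return summary


# Made-up sample answers, not real survey data
responses = [
    SurveyResponse("Finance", "ChatGPT (personal)", "weekly reporting",
                   ("financial figures",)),
    SurveyResponse("Sales", "Claude (personal)", "proposal drafting",
                   ("customer names",)),
    SurveyResponse("Operations", "ChatGPT (personal)", "weekly reporting",
                   ("customer names", "financial figures")),
]

summary = summarise(responses)
print(summary["tools"].most_common())
print(summary["data_types"].most_common())
```

Even a tally this simple shows which tools to match with a governed alternative and which data types the guardrails need to cover first.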

Provide a governed alternative. Deploy enterprise AI accounts with SSO, data retention policies, and audit logging. Anthropic's enterprise offering provides all three. The key is that the governed alternative must be at least as capable as what people are already using. If the enterprise tool is slower, more restricted, or harder to access, people will stay on their personal accounts.

We have seen this firsthand: one organisation deployed an enterprise AI tool with a restrictive usage policy, and adoption stalled at under 15 percent. The personal accounts stayed active because the governed option felt like a downgrade.

Migrate existing workflows. This is the step most organisations skip. It is not enough to provision enterprise accounts and send a memo. You need to actively help teams move their existing workflows into the governed environment.

The employee who built a content workflow on a personal Claude account needs help rebuilding it on the enterprise account, with the same functionality plus the governance layer. Our citizen development service handles this migration.

Build guardrails that enable. Define what data can and cannot be shared with AI tools. Set up data loss prevention rules. Configure retention policies.

A guardrail that says "customer PII must be anonymised before AI processing" is better than one that says "no customer data in AI tools." The first gives people a path forward. The second sends them back to personal accounts.

The distinction matters: enabling guardrails increase usage of the governed platform, while restrictive guardrails push usage back to ungoverned tools. We have written about how to build governance frameworks for mid-market organisations that balance security with usability.
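As an illustration of an enabling guardrail, the sketch below anonymises a prompt before it is sent to an AI tool. It assumes a hypothetical `anonymise` helper, two deliberately simple regular expressions, and a list of customer names supplied by the caller. Production-grade PII detection needs much broader coverage than this, typically through a dedicated data loss prevention tool.

```python
import re

# Illustrative patterns only: they catch common email and North American
# phone formats and will miss many real-world variants.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def anonymise(text: str, known_names: list[str]) -> str:
    """Replace emails, phone numbers, and listed names with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    # Names come from the caller (for example, a CRM export); this sketch
    # does not attempt to detect names on its own.
    for i, name in enumerate(known_names, start=1):
        text = re.sub(re.escape(name), f"[CUSTOMER_{i}]", text,
                      flags=re.IGNORECASE)
    return text


prompt = "Summarise the complaint from Jane Doe (jane.doe@example.com, 416-555-0199)."
safe = anonymise(prompt, known_names=["Jane Doe"])
print(safe)
# Summarise the complaint from [CUSTOMER_1] ([EMAIL], [PHONE]).
```

The employee still gets a usable summary, which is the point: the guardrail changes what leaves the organisation, not whether the work gets done.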

What governed AI usage looks like in practice

In a governed environment, every employee accesses AI through your enterprise account, authenticated via SSO. No personal accounts, no consumer tools, no data leaving your environment through side channels.

Every interaction is logged. The purpose is not to surveil employees but to understand usage patterns, identify high-value workflows, and maintain an audit trail for compliance.
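A minimal sketch of how logged interactions could surface high-value workflows follows. The `AuditEvent` fields and the workflow labels are assumptions made for illustration; an enterprise platform will expose its own log schema, and this code does not reflect any particular vendor's format.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    """Metadata about one AI interaction (hypothetical schema)."""
    user_id: str
    team: str
    workflow: str          # label assigned by the team, e.g. "weekly reporting"
    timestamp: datetime


def top_workflows(events: list[AuditEvent], n: int = 3) -> list[tuple[str, int]]:
    """Return the n most frequently used workflows with their counts."""
    return Counter(e.workflow for e in events).most_common(n)


now = datetime.now(timezone.utc)
# Made-up events, not real usage data
events = [
    AuditEvent("u1", "Finance", "weekly reporting", now),
    AuditEvent("u2", "Operations", "weekly reporting", now),
    AuditEvent("u3", "Sales", "proposal drafting", now),
]

print(top_workflows(events))
# [('weekly reporting', 2), ('proposal drafting', 1)]
```

A report like this aggregates by workflow rather than by person, which keeps the focus on usage patterns instead of individual monitoring.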

Data stays in your tenant. Conversations, uploaded files, and generated outputs are retained according to your policies and deleted according to your schedules. Your data is not used to train models.

Guardrails operate at the platform level: sensitive data categories are flagged automatically, and certain types of queries are routed or restricted based on the user's role and the data classification.
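One way to picture a role-and-classification rule is the sketch below. The classification levels, the role names, and the `ROLE_CEILING` table are hypothetical; your own data classification scheme and the controls your platform actually offers will differ.

```python
from enum import Enum


class Classification(Enum):
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3
    RESTRICTED = 4


class Decision(Enum):
    ALLOW = "allow"
    ALLOW_WITH_REDACTION = "allow_with_redaction"
    BLOCK = "block"


# Hypothetical policy: the highest classification each role may send as-is
ROLE_CEILING = {
    "analyst": Classification.INTERNAL,
    "finance_lead": Classification.CONFIDENTIAL,
}


def decide(role: str, classification: Classification) -> Decision:
    """Decide how a request is handled for a given role and data class."""
    # Restricted data never reaches the AI tool, whatever the role
    if classification is Classification.RESTRICTED:
        return Decision.BLOCK
    # Unknown roles default to the most conservative ceiling
    ceiling = ROLE_CEILING.get(role, Classification.PUBLIC)
    if classification.value <= ceiling.value:
        return Decision.ALLOW
    # Above the role's ceiling: route through redaction instead of refusing
    return Decision.ALLOW_WITH_REDACTION


print(decide("analyst", Classification.INTERNAL).value)
print(decide("analyst", Classification.CONFIDENTIAL).value)
print(decide("finance_lead", Classification.RESTRICTED).value)
```

Note the design choice in the last branch: data above a role's ceiling is routed through redaction rather than refused, so the employee still has a path forward on the governed platform.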

The difference for the employee is minimal. They still use AI in the same way. They still get the same quality of output.

The difference for the organisation is significant: full visibility, full control, and a defensible answer when a regulator or client asks how you handle AI and data privacy. For organisations in regulated industries, this shift from invisible consumer usage to visible governed usage is not optional. It is a requirement that grows more urgent as Canadian privacy legislation evolves.

Once migrated, the workflows your team built on personal accounts keep working. The analyst still gets their weekly data summary. The operations lead still automates their reporting.

The difference is that now you can see those workflows, support them, and build on them.

In our engagements, we have seen organisations complete this transition in weeks, not months. The construction firm we worked with on compliance intelligence moved from ungoverned personal AI usage to a fully governed environment with custom Model Context Protocol (MCP) connections to their regulatory code library. The team kept their productivity gains. The organisation gained visibility and control.

Shadow AI is an adoption signal. The usage is already there. The only question is whether it works for your organisation or against it. If you are ready to make it visible and safe, start with a Discovery call.

Frequently Asked Questions

What is shadow AI in a mid-market company?

Shadow AI refers to employees using personal AI accounts (ChatGPT, Claude, or others) for work tasks without IT visibility or governance. It means business data flows through consumer tools with no audit trail, no data retention controls, and no alignment with your organisation's security policies.

Why does banning AI tools not work?

Bans push usage underground. Employees switch to mobile devices or personal laptops, and you lose whatever limited visibility you had. The data exposure continues while your most productive people are penalised for taking initiative. Competitors who govern rather than ban gain ground every month.

How do you govern shadow AI without slowing teams down?

Deploy an enterprise AI environment with SSO, audit logging, and data retention policies. Then actively migrate existing personal workflows into the governed platform. The governed alternative must be at least as capable as what people already use, or they will stay on personal accounts.

What data risks does shadow AI create?

Employees paste customer names, financial figures, internal strategies, and proprietary processes into consumer AI tools. That data is governed only by the consumer terms of service, not your organisation's policies. Under privacy legislation such as PIPEDA, and under reforms of the kind proposed in the now-lapsed Bill C-27, this creates compliance exposure your legal team may not be aware of.

Adam Nameh

Co-Founder & Data Platform Architect. Adam Nameh is the Co-Founder of Alphabyte Solutions Inc., a Toronto-based data and AI consulting firm that has helped over 100 clients across North America transform complex data environments into actionable business intelligence. With a decade of hands-on experience in data architecture and platform design, Adam works directly with leadership teams to deliver practical AI and data solutions that drive real business outcomes.

View full profile →More from the blog

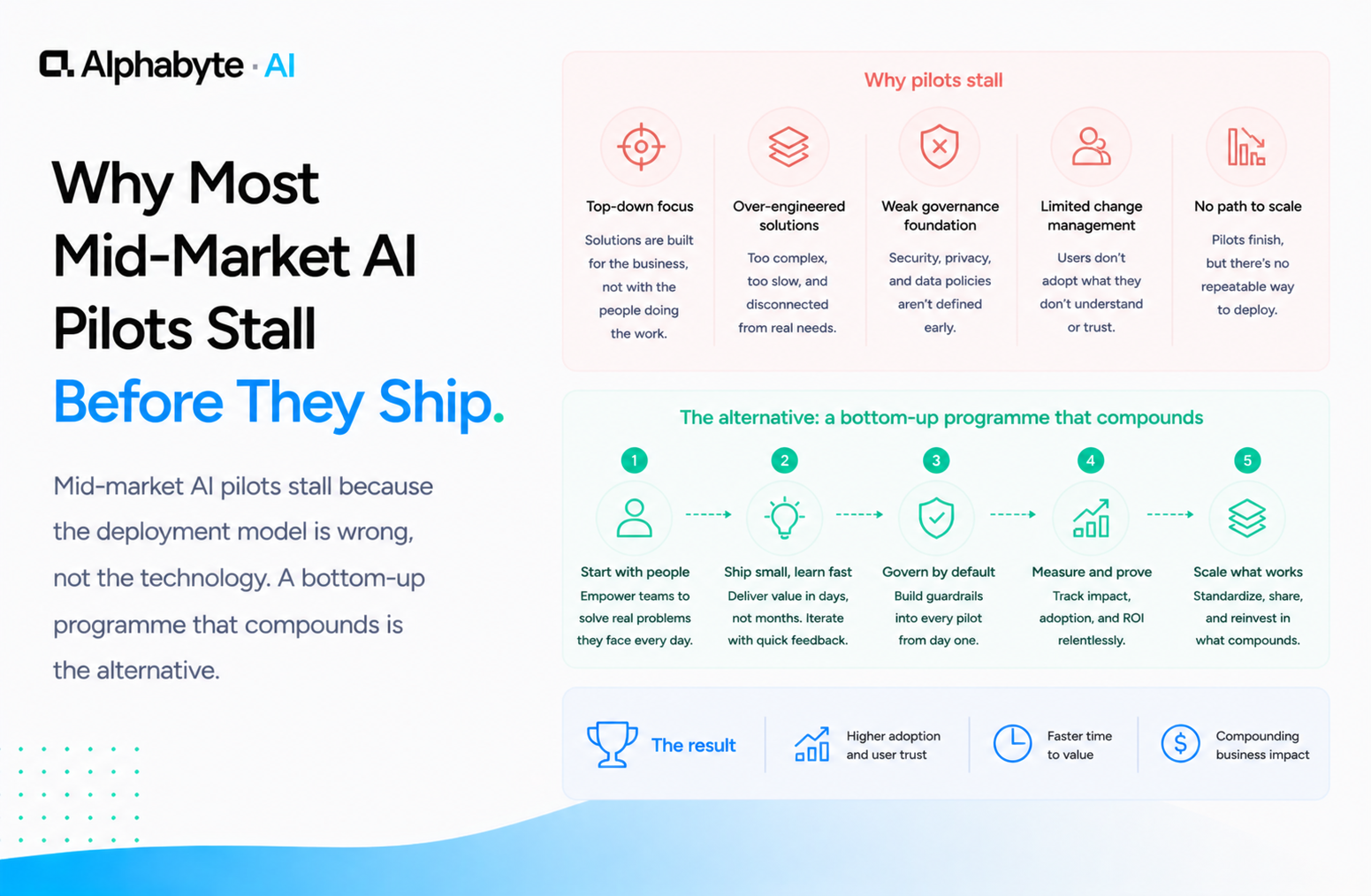

Why Most Mid-Market AI Pilots Stall Before They Ship

Mid-market AI pilots stall because the deployment model is wrong, not the technology. A bottom-up programme that compounds is the alternative.

Read more →

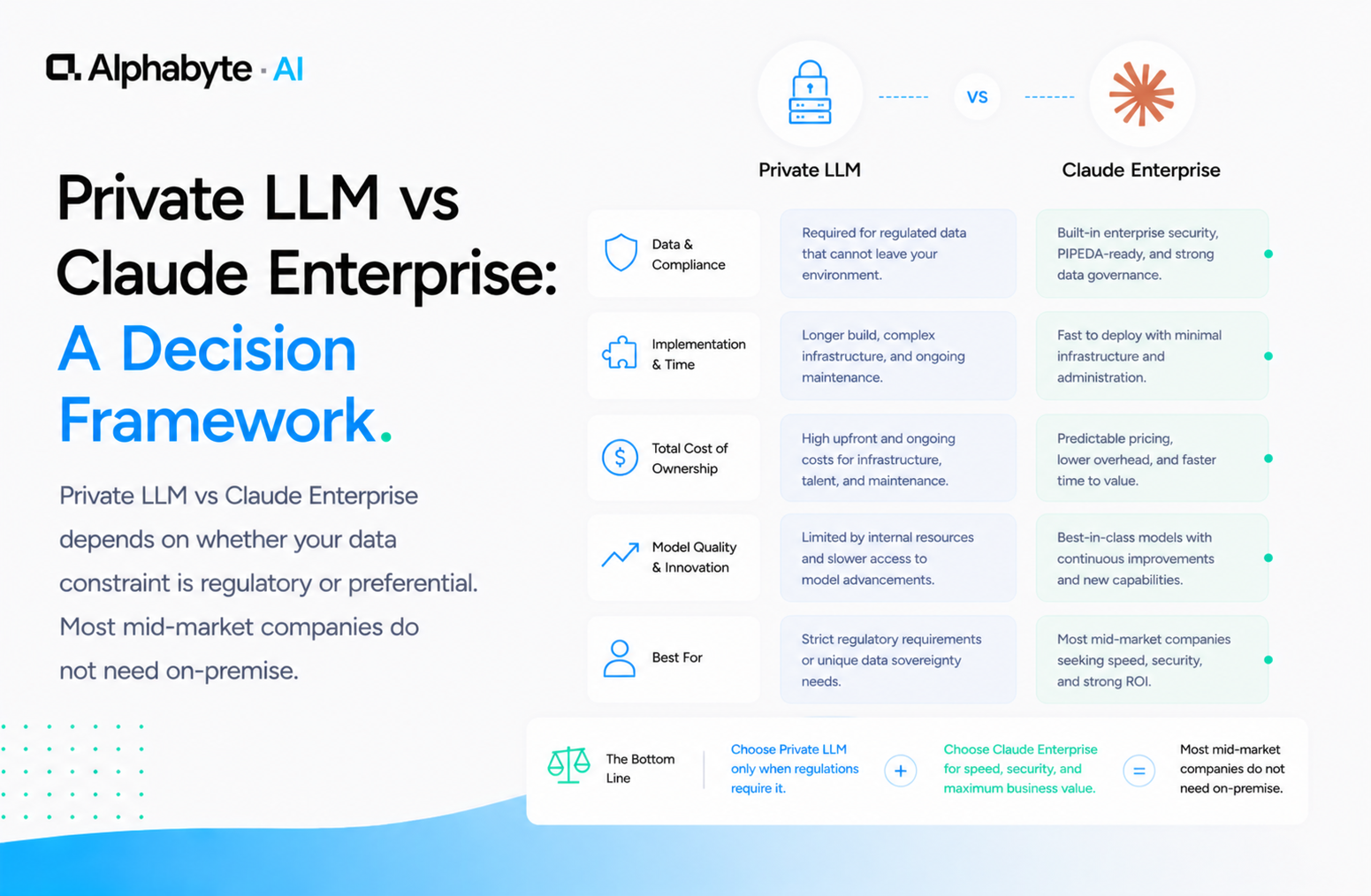

Private LLM vs Claude Enterprise: A Decision Framework

Private LLM vs Claude Enterprise depends on whether your data constraint is regulatory or preferential. Most mid-market companies do not need on-premise.

Read more →

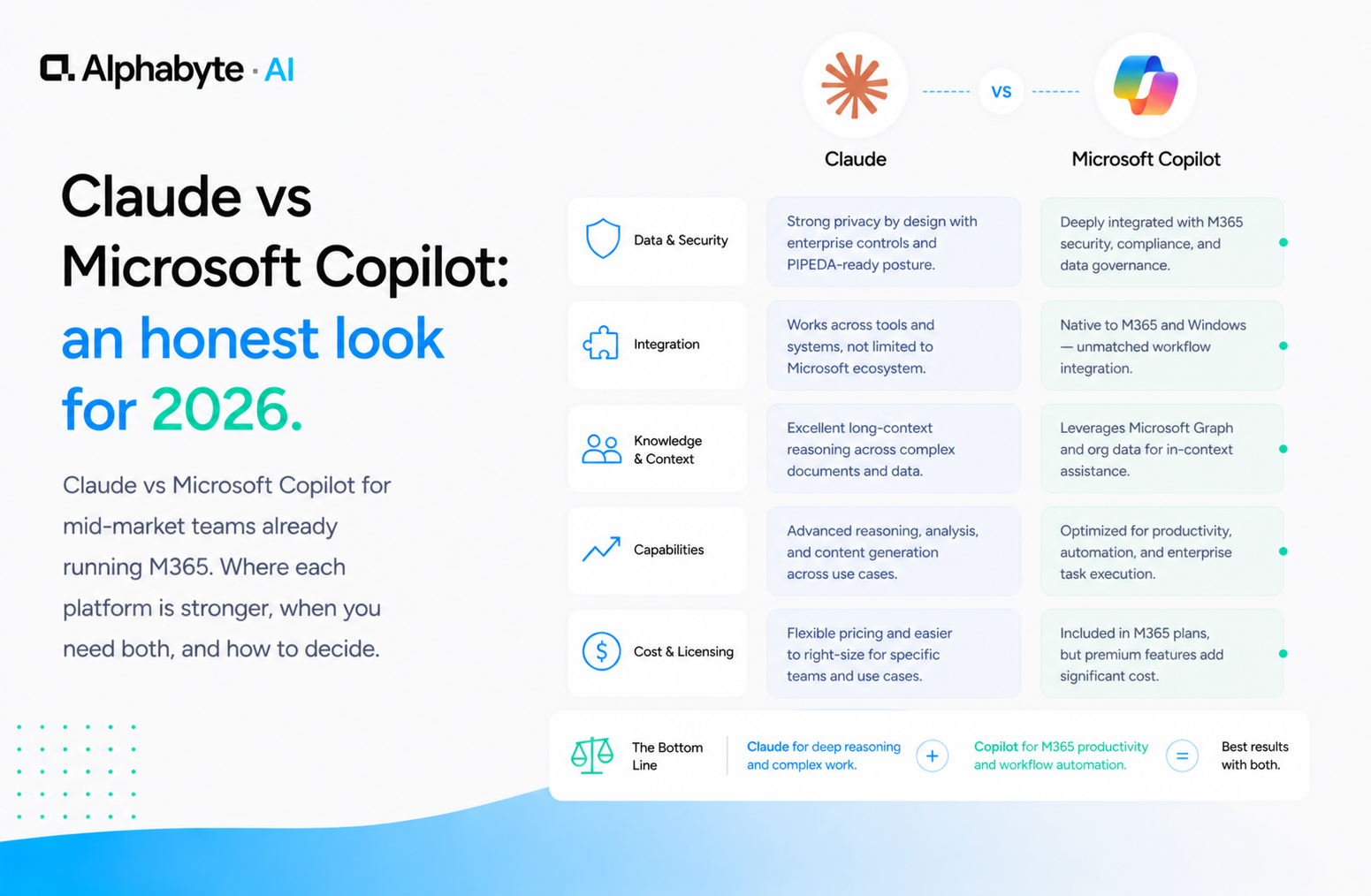

Claude vs Microsoft Copilot: an honest look for 2026

Claude vs Microsoft Copilot for mid-market teams already running M365. Where each platform is stronger, when you need both, and how to decide.

Read more →