Private LLM vs Claude Enterprise: A Decision Framework

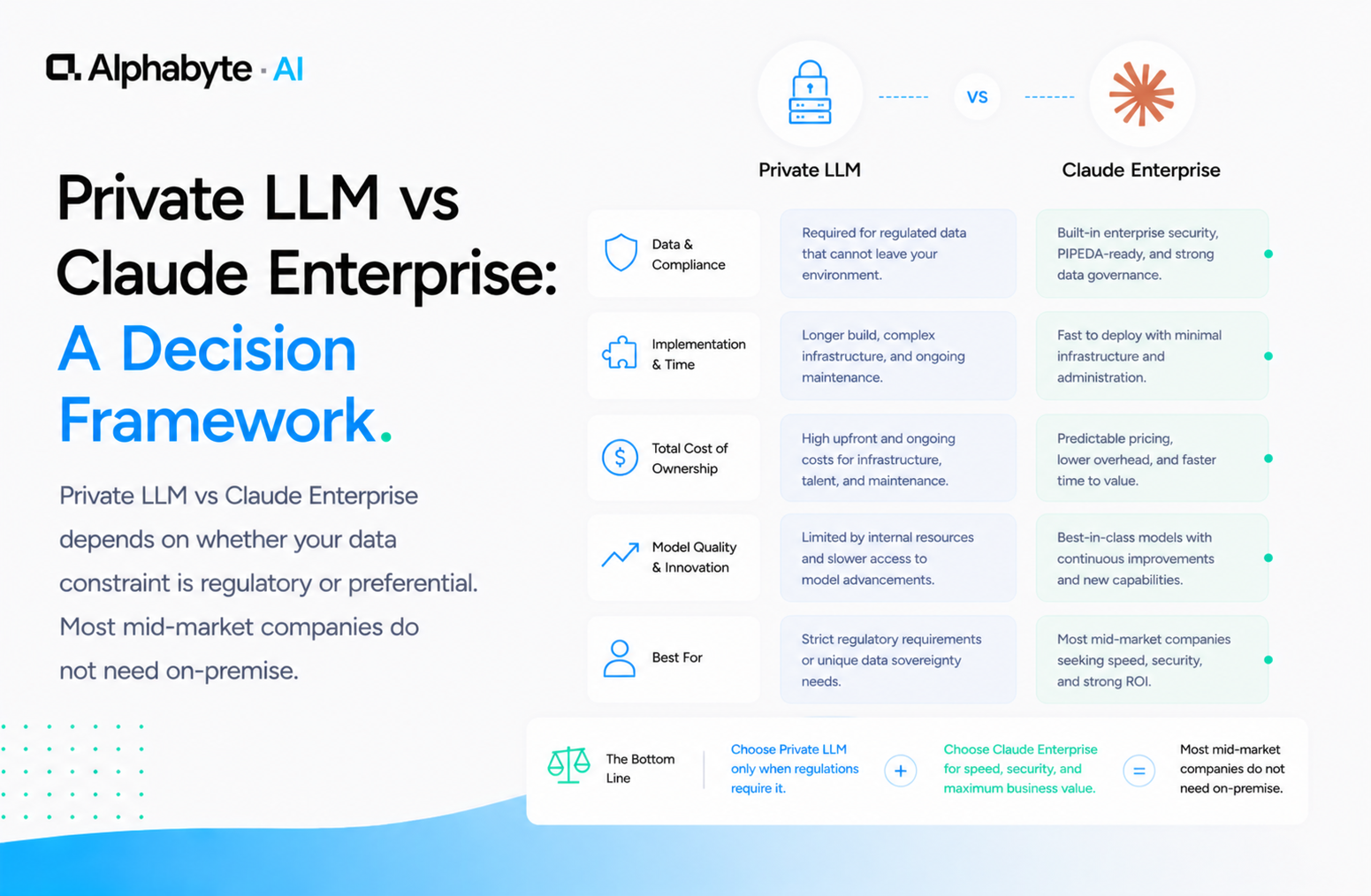

Private LLM vs Claude Enterprise depends on whether your data constraint is regulatory or preferential. Most mid-market companies do not need on-premise.

Mitchell Makos

May 1, 2026 · 8 min read

"Our data cannot leave our network." We hear this on every third discovery call. Sometimes it is a genuine regulatory requirement. Sometimes it is an organisational preference inherited from a prior security review that predates how cloud AI actually handles data.

The distinction matters. A genuine compliance mandate is a hard constraint that points toward on-premise infrastructure. An organisational preference is a policy decision that can be revisited when the facts change.

Most mid-market companies fall into the second category. They assume cloud AI means their proprietary data enters a training pipeline and becomes part of a public model. That assumption is outdated. Before committing to the cost and complexity of a private LLM, it is worth examining whether the constraint is regulatory or preferential.

Why this matters now

The cost gap between on-premise LLM infrastructure and cloud AI has widened, not narrowed. GPU hardware costs remain high, and the capability gap between self-hosted open-weight models and frontier models like Claude continues to grow.

Anthropic's enterprise offerings now include zero-retention processing and SOC 2 Type II compliance, which satisfy the data handling requirements that most mid-market companies actually face. Meanwhile, Canadian organisations preparing for Bill C-27 (the Digital Charter Implementation Act) need to understand what their specific compliance obligations actually require, rather than defaulting to on-premise because it sounds safer.

Is Your Data Constraint Regulatory or Preferential?

The first question in the private LLM decision is whether your data sovereignty requirement comes from a regulator or from an internal policy. These are fundamentally different constraints with different implications.

A regulatory constraint is specific and verifiable. Your regulator requires data residency within a specific jurisdiction. Your contract with a government agency prohibits third-party processing. An audit finding requires that certain data categories never leave your physical infrastructure.

These are hard constraints. If your compliance officer has identified a specific regulation, clause, or audit finding, the conversation about on-premise is straightforward.

A preferential constraint is an organisational policy, often written before the current generation of cloud AI data handling existed. "We do not send data to third parties" is a common policy. But it was written when "sending data to a third party" meant that data would be stored, indexed, and potentially accessible indefinitely. That is not how Claude Enterprise works.

In our engagements, we have seen this distinction matter repeatedly. A housing services organisation we worked with had a blanket policy against cloud AI. When we reviewed the actual regulatory requirements with their compliance team, the mandate was about data retention, not data processing.

Claude Enterprise's zero-retention model satisfied the requirement without any on-premise infrastructure. If you are building a governance framework for the first time, our AI governance framework for mid-market post walks through how to distinguish between regulatory and preferential constraints.

Deloitte's "State of AI in the Enterprise" survey found that data privacy and security concerns remain the most cited barrier to AI adoption. Organisations which conducted specific policy reviews, rather than applying blanket restrictions, were significantly more likely to move into production deployment.

Claude Enterprise Already Provides Zero-Retention Processing

Here is what Claude Enterprise's data handling actually looks like:

- Zero data retention. Your prompts and outputs are not stored after processing. Your data is not used for model training.

- SOC 2 Type II compliance. Anthropic maintains independent audits of its security controls.

- SSO and SCIM provisioning. Your identity provider controls access. Offboarded employees lose access immediately.

- Admin controls and audit logging. You can see who is using the system, what they are accessing, and enforce usage policies centrally.

The common misconception is that sending data to an API means that data lives on someone else's server indefinitely. With Claude Enterprise, the data is processed and returned. It is not stored, not indexed, not used for any purpose beyond generating your response. Anthropic's data handling documentation provides the full technical specification.

For most mid-market companies, this meets the compliance bar. Professional services firms, manufacturers, logistics operators, and financial advisors all operate under data handling requirements that zero-retention processing satisfies.

That is a stronger data handling posture than most companies maintain for their own internal systems. The question is not whether the cloud is secure enough. It is whether your on-premise alternative would actually be more secure than what Anthropic already provides.

For Canadian organisations, PIPEDA requires that personal information be protected by appropriate safeguards during processing. Zero-retention processing, SOC 2 compliance, and administrative access controls satisfy this standard for most use cases. The shadow AI governance risk is often greater: employees using ungoverned consumer AI tools with no data handling guarantees at all.

When Does On-Premise Actually Make Sense?

There are legitimate cases where a private LLM is the right answer. They are less common than most people assume, but they are real.

Explicit data residency mandates. Defence contractors operating under ITAR. Healthcare organisations subject to specific provincial or state-level data residency laws. Government agencies with security requirements that no commercial AI provider yet satisfies. If your regulator says the data cannot leave your physical infrastructure, that is the end of the conversation.

Air-gapped environments. If your network has no external connectivity by design, common in classified environments and certain critical infrastructure, cloud AI is not an option regardless of its security posture.

High inference volume. If you are running millions of inference calls per month against a specific, narrow use case, self-hosting a smaller model can become cost-competitive. The math only works at significant scale, and the model will be less capable than Claude, but the economics can justify it.

Specialised fine-tuning requirements. If your use case requires a model trained on proprietary data, such as medical imaging analysis or specialised document classification, a self-hosted fine-tuned model may outperform a general-purpose model on that narrow task. This applies primarily to organisations with large, domain-specific training datasets and the ML engineering staff to maintain the fine-tuning pipeline.

McKinsey's 2024 "The state of AI" survey noted that only a minority of organisations reported deploying self-hosted models, and that the primary driver in nearly every case was a specific regulatory mandate rather than a general security preference. For more detail on what the Infrastructure service involves when on-premise is the right call, that page covers the full engagement model.

How Should You Decide Between Private LLM and Cloud AI?

Here is the decision tree we walk through with clients.

First: is this regulatory or preferential? Do you have a specific regulation, contract clause, or audit finding that requires data to remain on your infrastructure? Or is it an internal policy that predates the current generation of cloud AI data handling? If it is preferential, revisit the policy with current facts before committing to on-premise infrastructure.

Second: does zero-retention satisfy the requirement? If the mandate is "our data cannot be used for training" or "our data cannot be stored by a third party," Claude Enterprise already meets that standard. Many compliance requirements are satisfied by zero-retention processing. Read the actual regulation, not the summary someone wrote three years ago.

Third: what is the total cost of ownership? On-premise LLM infrastructure is not a one-time purchase. You need GPU hardware (or a dedicated cloud partition), ongoing maintenance, model updates, inference optimisation, and staff who understand the stack. For a mid-market company, that is typically $200K to $500K in year one and $100K or more annually after that. Compare that against API-based pricing that scales with actual usage.

Fourth: is the capability gap acceptable? On-premise models are smaller. A self-hosted 70B parameter model is capable, but it is not Claude. If your use cases require strong reasoning, long-context understanding, or nuanced language generation, the gap is significant.

We saw this directly when building an executive productivity suite with purpose-built Claude agents: the quality of Claude's reasoning on complex cross-system queries was materially better than what open-weight alternatives produced.

Most mid-market companies land on Claude Enterprise. The ones that genuinely need on-premise know it before they finish reading this post, because their compliance officer already told them.

In our engagements, the private LLM conversation usually resolves in the first week. The actual data handling requirements, once reviewed against current cloud AI safeguards, rarely require on-premise. The more productive conversation is about which systems to connect to Claude first and how to govern the rollout.

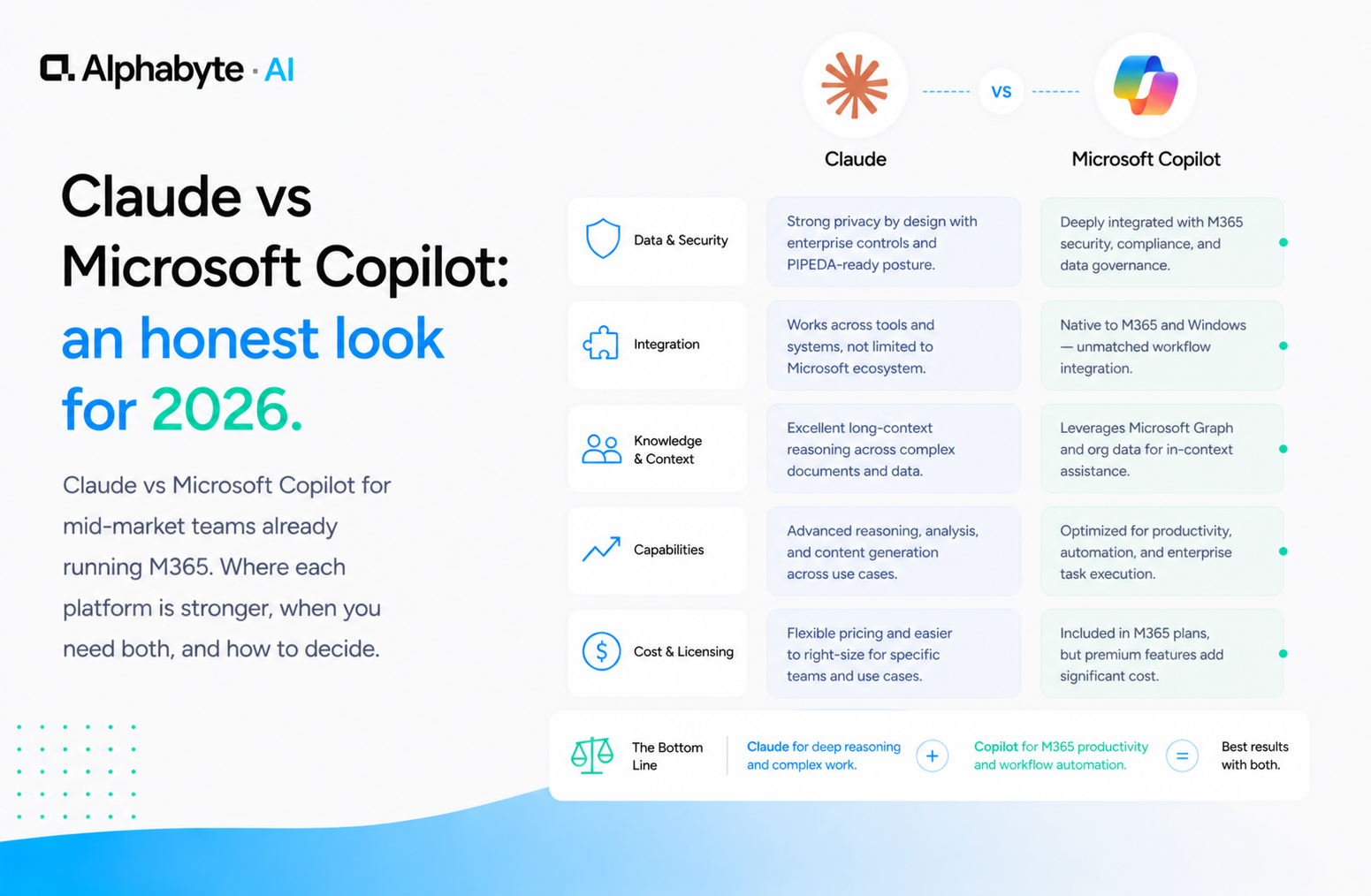

If you are still deciding, a Discovery engagement is where we help you map the specific requirements to the right infrastructure model. If you are comparing Claude to other cloud options, our Claude vs ChatGPT Enterprise and Claude vs Microsoft Copilot comparisons cover that ground.

Frequently Asked Questions

Does Claude Enterprise store my data?

No. Claude Enterprise operates with zero data retention. Your prompts and outputs are not stored after processing and are not used for model training. Anthropic maintains SOC 2 Type II compliance with independent audits of its security controls.

How much does an on-premise LLM cost for a mid-market company?

On-premise LLM infrastructure typically costs between 200,000 and 500,000 dollars in the first year, including GPU hardware or a dedicated cloud partition, setup, and integration. Ongoing costs run 100,000 dollars or more annually for maintenance, model updates, and specialised staff. Compare this against API-based pricing that scales with actual usage.

Is a private LLM more secure than Claude Enterprise?

Not necessarily. Claude Enterprise provides zero data retention, SOC 2 Type II compliance, SSO integration, and audit logging. Many mid-market companies maintain weaker security controls for their own internal systems than what Anthropic provides. The question is whether your specific compliance requirement mandates data residency on your own infrastructure.

When should a company choose an on-premise LLM?

On-premise makes sense in four situations: explicit data residency mandates from a regulator, air-gapped environments with no external connectivity, high inference volume where millions of monthly calls make self-hosting cost-competitive, and specialised fine-tuning requirements where a model trained on proprietary data outperforms a general-purpose model on a narrow task.

Can Claude Enterprise meet Canadian privacy requirements under PIPEDA?

For most mid-market companies, Claude Enterprise's zero-retention processing, SOC 2 Type II compliance, and administrative controls satisfy the requirements under PIPEDA. The data is processed and returned without being stored, indexed, or used for any other purpose. Companies subject to stricter sector-specific mandates should review the specific regulation with legal counsel.

Mitchell Makos

Principal Architect & AI Delivery Lead. Mitchell is a senior platform architect and full-stack engineer with a decade of experience shipping production systems at enterprise scale — from IBM Cloud Garage to Hertz, NCR, Fidelity, Royal Bank of Canada, Pacific Gas & Electric, and Erie Insurance.

View full profile →More from the blog

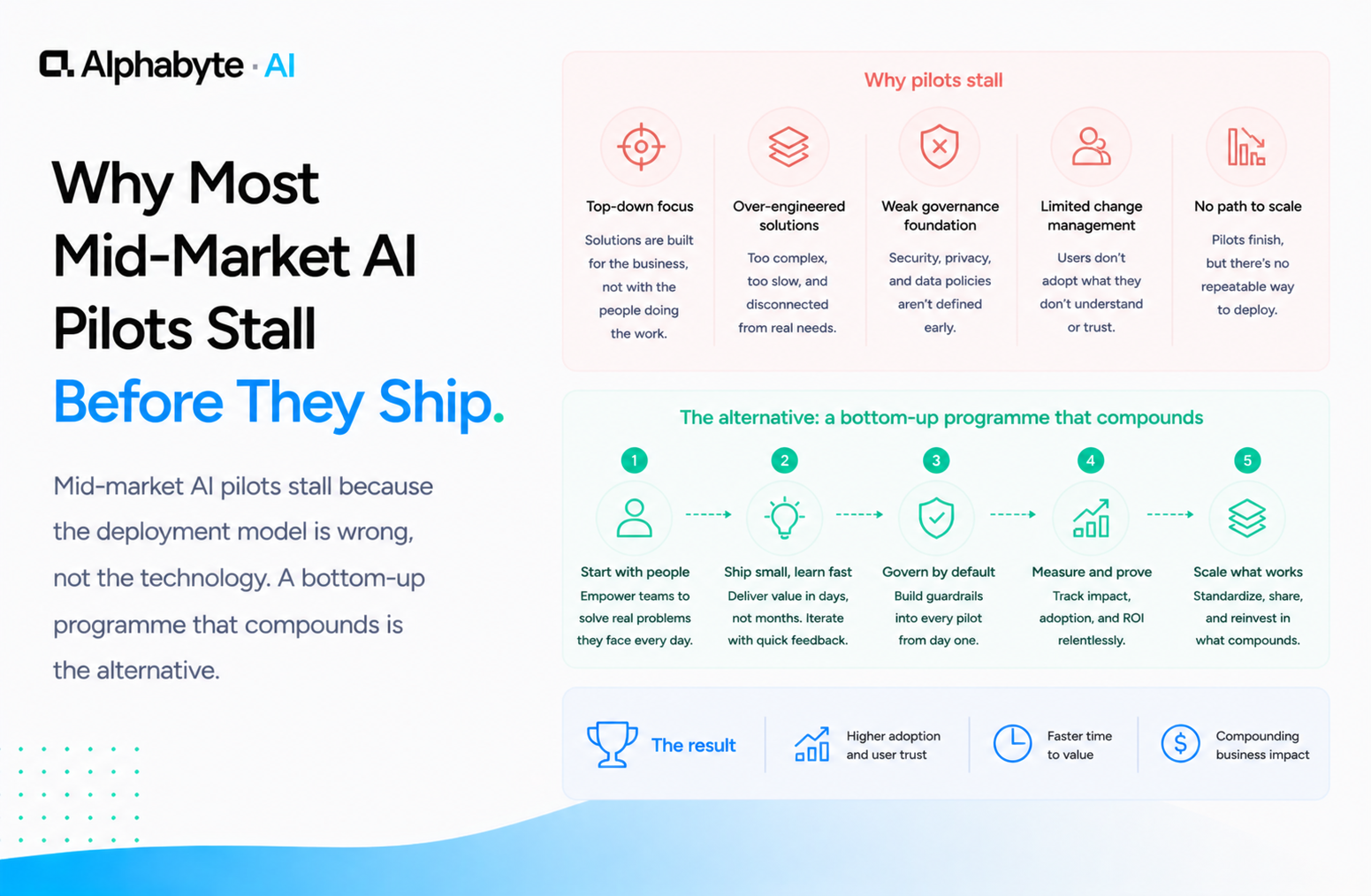

Why Most Mid-Market AI Pilots Stall Before They Ship

Mid-market AI pilots stall because the deployment model is wrong, not the technology. A bottom-up programme that compounds is the alternative.

Read more →

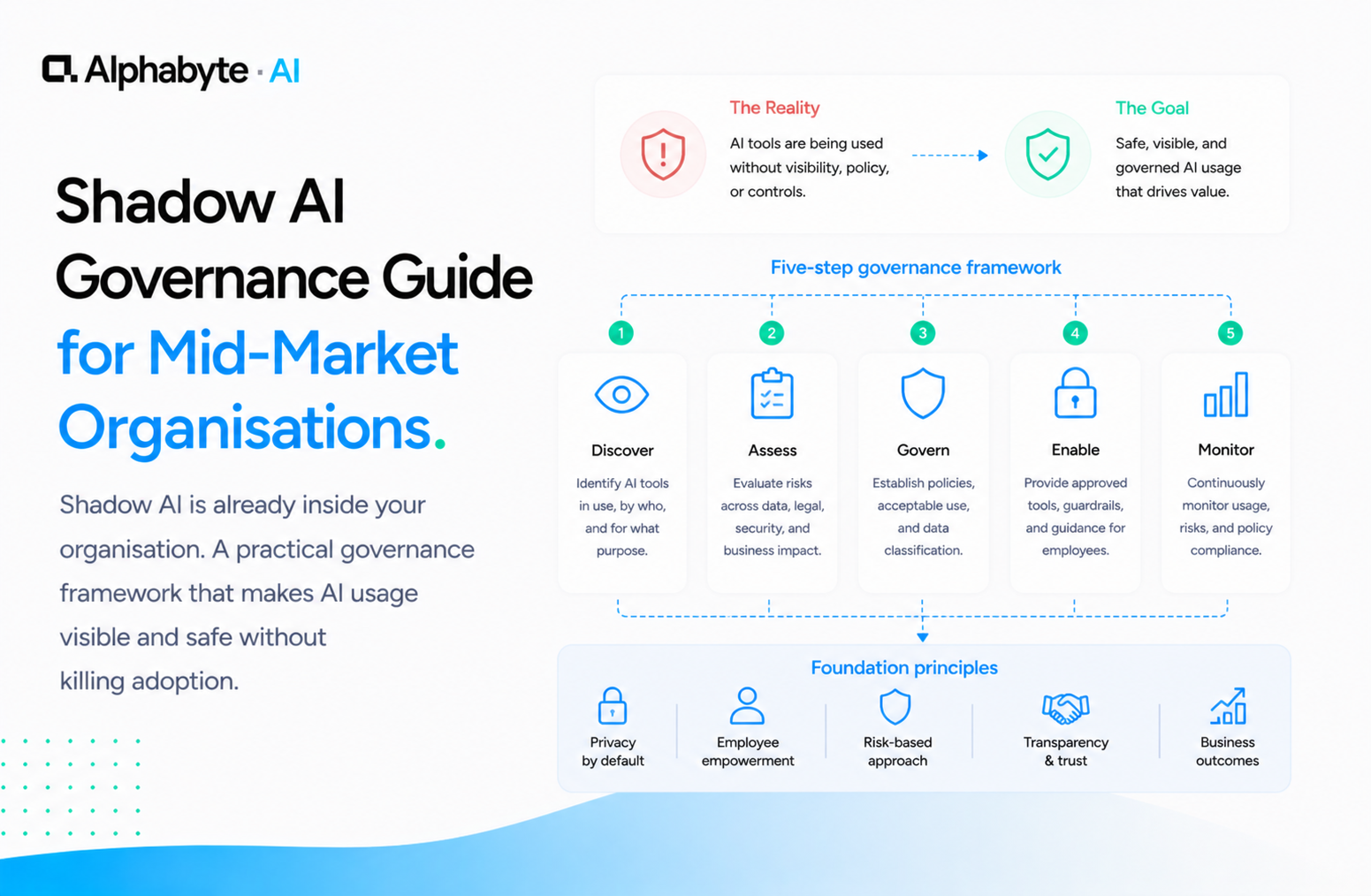

Shadow AI Governance Guide for Mid-Market Organisations

Shadow AI is already inside your organisation. A practical governance framework that makes AI usage visible and safe without killing adoption.

Read more →

Claude vs Microsoft Copilot: an honest look for 2026

Claude vs Microsoft Copilot for mid-market teams already running M365. Where each platform is stronger, when you need both, and how to decide.

Read more →