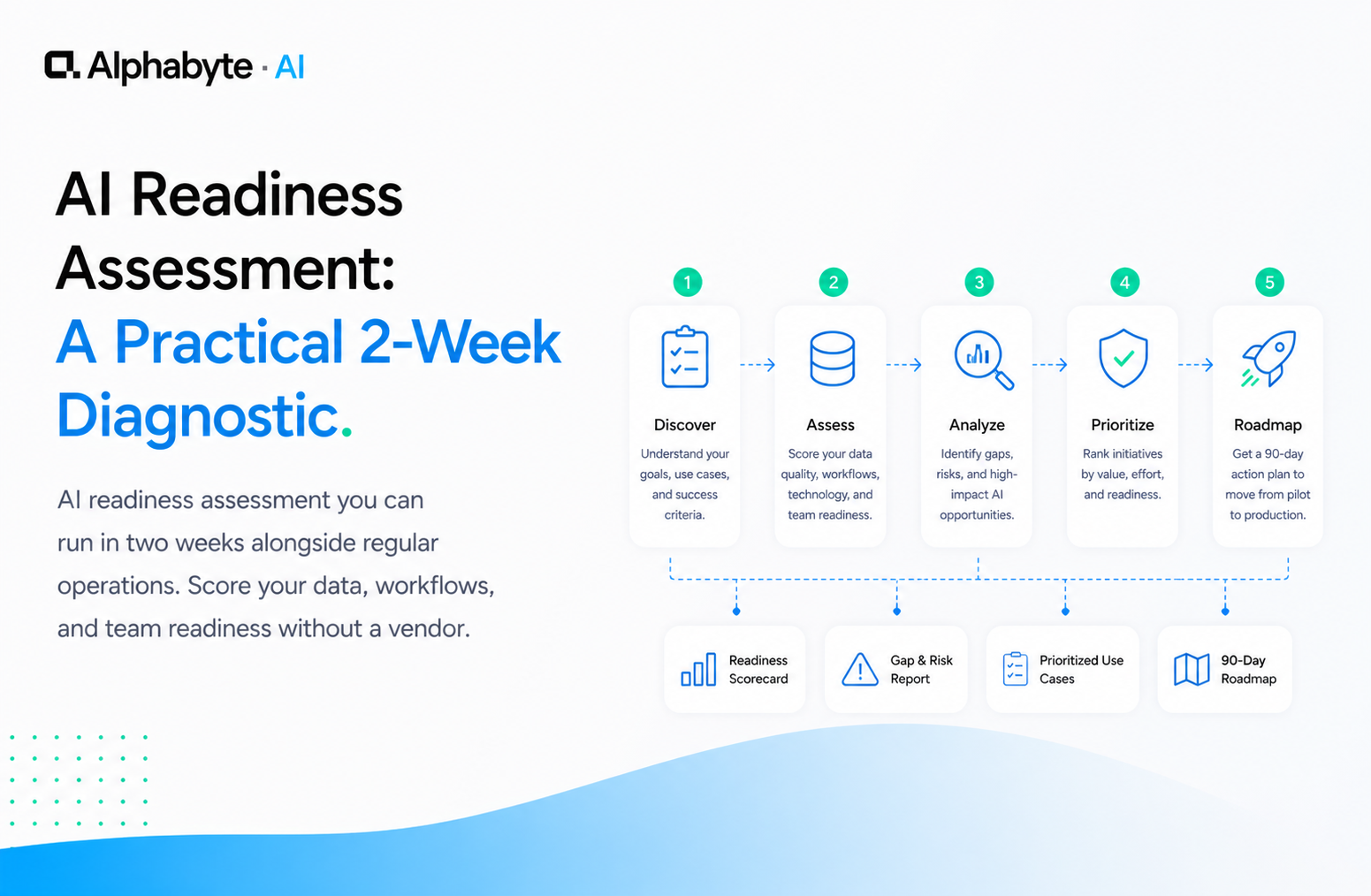

AI Readiness Assessment: A Practical 2-Week Diagnostic

An AI readiness assessment you can run in two weeks alongside regular operations. Score your data, workflows, and team readiness without hiring a vendor.

Adam Nameh

April 7, 2026 · 8 min read

Every mid-market operations leader faces the same question: is our organisation ready for AI, or are we about to waste six months learning we weren't? Most assessment frameworks were designed for enterprises with dedicated strategy teams and six-figure consulting budgets, leaving mid-market companies without a practical starting point.

An AI readiness assessment does not need to take three months or produce an 80-page deck. A structured two-week self-diagnostic, run alongside regular operations, gives you enough information to make a clear decision: move forward with a specific use case, or fix the gap that would cause a deployment to fail.

The foundation is data readiness. If the data is not ready, nothing built on it will work. This framework addresses data first, then people, then gives you a scoring model that points to a next step.

Why Do Enterprise Assessment Frameworks Fail Mid-Market Teams?

Enterprise AI readiness frameworks assume resources that mid-market organisations do not have. They expect a dedicated strategy team, three to six months of runway, and a budget large enough to justify the consulting engagement itself. The output is a research project, not a decision tool.

According to McKinsey's 2024 "The state of AI" survey, organisations that moved fastest on AI adoption were those that focused on a small number of high-impact use cases rather than comprehensive assessments.

Mid-market operations leaders are running a team, hitting quarterly targets, and trying to figure out where AI fits at the same time. You need a framework that produces a decision within two weeks. Not a roadmap that takes longer to build than the first deployment would.

In our engagements, we have seen this pattern repeatedly. A housing services organisation we worked with had spent months on a vendor-led assessment before engaging us for an AI enablement roadmap. The assessment was thorough but had not produced a single actionable recommendation.

The two-week diagnostic format we use now exists because of experiences like that one.

The goal is not to answer every question. It is to answer the first one: are we ready, or what do we need to fix?

What Does Your Data Actually Look Like?

Week one is about documenting what exists today. Not what you wish you had. Not what a vendor told you they could connect to. What is actually accessible right now.

Start with an inventory. List every system that holds operational data: your ERP, your CRM, your project management tools, your spreadsheets, your email inboxes. Most mid-market organisations have between five and fifteen systems that matter.

For each system, answer four questions:

- Can you export the data? Not theoretically. Can you get a CSV export or an API connection today?

- How current is the data? Real-time, daily, weekly, or "whenever someone remembers to update it"?

- What is the quality like? Are records complete? Are fields consistent? Are there obvious duplicates or gaps?

- Is the system connected to anything else, or is it isolated?
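If you want to keep the inventory somewhere more structured than a spreadsheet, the four questions map onto one record per system. Below is a minimal Python sketch, assuming a hypothetical `SystemRecord` structure and invented example systems. It is an illustration, not a prescribed tool; a shared spreadsheet with the same columns does the same job.

```python
from dataclasses import dataclass


@dataclass
class SystemRecord:
    """One row of the week-one inventory (hypothetical structure)."""
    name: str
    exportable: bool         # Can you get a CSV export or API connection today?
    freshness: str           # "real-time", "daily", "weekly", or "ad hoc"
    quality_notes: str       # Completeness, consistency, duplicates, gaps
    connected_to: list[str]  # Systems it exchanges data with; empty if isolated


# Invented example inventory for illustration only.
inventory = [
    SystemRecord("ERP", True, "daily", "Complete; some duplicate vendors", ["CRM"]),
    SystemRecord("CRM", True, "real-time", "Inconsistent industry field", ["ERP"]),
    SystemRecord("Ops tracker (spreadsheet)", False, "ad hoc", "Gaps in status column", []),
]


def isolated_systems(records: list[SystemRecord]) -> list[str]:
    """Names of systems with no connection to anything else."""
    return [r.name for r in records if not r.connected_to]


def manual_or_stale(records: list[SystemRecord]) -> list[str]:
    """Names of systems refreshed only weekly or whenever someone remembers."""
    return [r.name for r in records if r.freshness in ("weekly", "ad hoc")]


print(isolated_systems(inventory))
print(manual_or_stale(inventory))
```

The two helpers surface what the inventory is meant to reveal: which systems stand alone, and which depend on someone refreshing them by hand.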

Do not try to fix anything this week. You are building a map, not a plan. Most leaders are surprised by what they find, not because the situation is worse than expected, but because they have never seen all the data sources listed in one place.

The inventory itself is valuable. It reveals which systems are genuinely connected and which ones rely on someone manually exporting a spreadsheet every Friday.

Deloitte's "State of AI in the Enterprise" survey found that data quality and data integration remain the top barriers to AI deployment across industries. Your week-one inventory tells you whether that barrier applies to your organisation.

If you want a deeper framework for evaluating data quality, our Data Readiness service uses a structured audit that scores each source on five dimensions. For this self-assessment, the four questions above are enough to separate the viable from the problematic.
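The split between viable and problematic sources can be made explicit with a simple rule. This sketch uses an illustrative rule of our own, not the five-dimension audit mentioned above: a source counts as viable if it can be exported today, is refreshed at least weekly, and has no major quality problems. The `Answers` fields and example systems are hypothetical.

```python
from typing import NamedTuple


class Answers(NamedTuple):
    """Week-one answers for one system (hypothetical field names)."""
    exportable: bool  # CSV or API access available today
    freshness: str    # "real-time", "daily", "weekly", or "ad hoc"
    quality_ok: bool  # Records reasonably complete, consistent, de-duplicated
    connected: bool   # Linked to at least one other system


def triage(systems: dict[str, Answers]) -> tuple[list[str], list[str]]:
    """Split systems into viable and problematic sources.

    Assumed rule: viable only if exportable today, refreshed at least weekly,
    and free of major quality problems. Isolation alone does not disqualify
    a source; it only signals integration work later.
    """
    viable, problematic = [], []
    for name, a in systems.items():
        if a.exportable and a.freshness != "ad hoc" and a.quality_ok:
            viable.append(name)
        else:
            problematic.append(name)
    return viable, problematic


# Invented example answers.
systems = {
    "ERP": Answers(True, "daily", True, True),
    "CRM": Answers(True, "real-time", False, True),
    "Scheduling spreadsheet": Answers(True, "weekly", True, False),
    "Shared inbox": Answers(False, "ad hoc", False, False),
}
viable, problematic = triage(systems)
print("Viable:", viable)
print("Problematic:", problematic)
```

Here the CRM lands on the problematic side despite being real-time and connected, because inconsistent fields make it unreliable input; the isolated scheduling spreadsheet stays viable because it exports cleanly.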

Where Do People Spend Time on Repeatable Work?

Week two shifts focus from systems to humans. The goal is to map the human layer onto the data layer you documented in week one.

Start by identifying the roles that carry the most repetitive work. Which team members spend hours each week on work that follows a predictable pattern? Data entry, report generation, invoice matching, schedule coordination, status updates across systems. These are the workflows where AI use cases tend to be highest-value and lowest-risk.
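Week-two conversations usually produce rough hours per task, which is enough to rank roles. A minimal sketch, assuming a hypothetical time log where each entry records the role, the task, estimated weekly hours, and whether the task follows a predictable pattern. The roles and numbers are invented.

```python
from collections import defaultdict

# Hypothetical week-two notes: (role, task, hours per week, pattern-based?)
time_log = [
    ("AP clerk", "Invoice matching", 12.0, True),
    ("AP clerk", "Vendor calls", 4.0, False),
    ("Ops coordinator", "Schedule coordination", 8.0, True),
    ("Ops coordinator", "Status updates across systems", 5.0, True),
    ("Analyst", "Monthly report generation", 6.0, True),
    ("Analyst", "Ad hoc investigations", 10.0, False),
]


def repeatable_hours_by_role(log):
    """Total weekly hours per role spent on pattern-based work, highest first."""
    totals = defaultdict(float)
    for role, _task, hours, repeatable in log:
        if repeatable:
            totals[role] += hours
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


for role, hours in repeatable_hours_by_role(time_log):
    print(f"{role}: {hours:.0f} h/week")
```

Note that the analyst logs the most hours overall but ranks last, because most of that time is non-repeatable investigation work.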

Next, find out who is already using AI. In most mid-market organisations, someone is. They are pasting data into ChatGPT to draft emails, using Copilot to write formulas, or running customer records through a free tool they found online.

This is not behaviour to punish. It is a signal. It tells you where the pain is sharpest and where adoption will be fastest. Our citizen developer enablement playbook covers how to channel this energy productively rather than suppressing it. If informal AI usage concerns you from a governance perspective, our shadow AI governance post addresses that directly.

Finally, assess comfort level. You need at least a few people on your team who are willing to test, give feedback, and iterate. If your entire team is resistant, your first project is not an AI deployment. It is education. Our executive enablement programme is designed for exactly that situation.

By the end of week two, you should have a clear picture: here is our data, here is where people spend their time on repeatable work, and here is how ready the team is to try something new.

How Should You Score and Interpret Your Results?

Score yourself on three dimensions, each on a scale of one to five.

Data readiness. A one means your data is scattered, inconsistent, and inaccessible. A five means your data is clean, connected, and exportable via API.

Workflow complexity. A one means your target workflows are highly variable with constant exceptions. A five means they are predictable, pattern-based, and well-documented.

Team readiness. A one means no AI experience and active resistance. A five means multiple team members already experimenting and open to change.
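The rubric only anchors the ends of each scale. For data readiness, one way to place yourself between one and five is to reuse the week-one answers. The heuristic below is an assumption for illustration, not a standard rubric: each system is checked for the three qualities in the five-point description (exportable, clean, connected), and the share of checks passed across all systems is scaled onto the one-to-five range. Workflow and team scores remain judgement calls from week two.

```python
# Hypothetical week-one answers per system: (exportable today, quality acceptable, connected)
answers = {
    "ERP": (True, True, True),
    "CRM": (True, False, True),
    "Scheduling spreadsheet": (True, True, False),
    "Shared inbox": (False, False, False),
}


def data_readiness_score(answers: dict[str, tuple[bool, bool, bool]]) -> int:
    """Scale the share of passed checks onto 1-5 (assumed heuristic).

    0% of checks passed maps to 1 (scattered, inconsistent, inaccessible);
    100% maps to 5 (clean, connected, exportable).
    """
    if not answers:
        return 1  # No accessible systems at all: lowest score
    passed = sum(sum(flags) for flags in answers.values())
    share = passed / (3 * len(answers))
    return 1 + int(4 * share + 0.5)  # Round half up to the nearest whole score


print(data_readiness_score(answers))  # 3
```

Seven of twelve checks pass in this example, which lands at three: workable, but with clear gaps to fix.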

If you score three or higher on all three dimensions, you are ready to identify specific AI use cases and start building an AI roadmap. The question is not whether to deploy, but where to start.

If any dimension scores below three, that is your starting point. A low data readiness score means you need a data cleanup project before any AI work makes sense. A low workflow score means you need to simplify and document your processes first. A low team score means you need training and exposure before deploying anything.
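The interpretation rules are simple enough to write down directly. A minimal sketch, assuming scores are whole numbers from one to five; the names `interpret` and `NEXT_STEP` are hypothetical. When more than one dimension falls below three, this sketch lists the next steps in data, workflow, team order, following the data-first emphasis earlier in the article. That ordering is our assumption, not part of the scoring model.

```python
DIMENSIONS = ("data readiness", "workflow complexity", "team readiness")

NEXT_STEP = {
    "data readiness": "Run a data cleanup project before any AI work.",
    "workflow complexity": "Simplify and document the target processes first.",
    "team readiness": "Invest in training and exposure before deploying anything.",
}


def interpret(scores: dict[str, int]) -> list[str]:
    """Apply the threshold rule: three or higher everywhere means pick use cases;
    otherwise every dimension below three becomes a starting point."""
    for dim in DIMENSIONS:
        value = scores[dim]
        if not 1 <= value <= 5:
            raise ValueError(f"{dim} must be scored 1-5, got {value}")
    gaps = [dim for dim in DIMENSIONS if scores[dim] < 3]
    if not gaps:
        return ["Ready: identify specific AI use cases and start an AI roadmap."]
    return [NEXT_STEP[dim] for dim in gaps]


print(interpret({"data readiness": 4, "workflow complexity": 3, "team readiness": 3}))
# Two gaps: the data cleanup step is listed before the training step.
print(interpret({"data readiness": 2, "workflow complexity": 4, "team readiness": 2}))
```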

This is not a grade. It is a map. It tells you where you are and what comes next. Gartner's Hype Cycle for AI consistently notes that organisations with structured readiness assessments are more likely to move past the pilot stage into production deployment.

When Does Outside Help Make Sense?

The two-week assessment is designed to be self-directed. A VP of Operations or a Director of IT can run it without outside help. But there are situations where bringing in a partner makes sense.

If your data is fragmented across many systems with no integration layer, scoping the cleanup is hard to do from the inside. You are too close to the workarounds you have built over the years. An outside perspective helps identify what is essential and what is accumulated complexity. We built a custom MCP (Model Context Protocol) server for a construction firm in exactly this situation, connecting regulatory compliance records that had been spread across multiple disconnected systems.

If your team has no AI experience at all, the gap between "we scored ourselves" and "we know what to build" can be wide. A structured Discovery engagement compresses that gap. It takes the self-assessment output and turns it into a prioritised list of use cases with effort estimates and expected impact.
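The exact weighting a Discovery engagement applies is not spelled out here, but a common, simple way to turn effort estimates and expected impact into a ranked list is to sort by the ratio of impact to effort. The sketch assumes hypothetical one-to-five ratings and invented use cases; treat it as an illustration of the idea, not the method used in the engagement.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    impact: int  # Expected impact, 1 (low) to 5 (high); hypothetical scale
    effort: int  # Estimated effort, 1 (small) to 5 (large); hypothetical scale


def prioritise(cases: list[UseCase]) -> list[UseCase]:
    """Rank by impact-to-effort ratio; ties go to the higher-impact case."""
    return sorted(cases, key=lambda c: (c.impact / c.effort, c.impact), reverse=True)


# Invented candidates for illustration.
candidates = [
    UseCase("Invoice matching", impact=4, effort=2),
    UseCase("Cross-system status updates", impact=3, effort=1),
    UseCase("Demand forecasting", impact=5, effort=5),
    UseCase("Weekly report drafting", impact=2, effort=1),
]
for case in prioritise(candidates):
    print(case.name)
```

A pure ratio favours quick wins, which suits a first deployment; a high-impact, high-effort project such as forecasting drops to the bottom until the basics are in place.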

If you are in a regulated industry, governance requirements add a layer of complexity. What data can go into an AI system? What decisions can AI inform versus make? Canadian organisations working under PIPEDA, and tracking what follows Bill C-27 (which died on the order paper when Parliament was prorogued in January 2025), need to answer these questions with specific compliance implications in mind. Our AI governance framework walks through the practical steps.

The self-assessment exists to settle one question: proceed with a specific use case, or fix a gap first? If the answer is clear, the Discovery service is where most organisations go next.

Frequently Asked Questions

How long does an AI readiness assessment take?

A practical AI readiness assessment can be completed in two weeks alongside regular operations. Week one covers data and systems inventory. Week two covers people, workflows, and team readiness. No dedicated strategy team or consulting budget is required.

What are the three dimensions of AI readiness?

The three dimensions are data readiness (quality, accessibility, and structure of your operational data), workflow complexity (how predictable and well-documented your target processes are), and team readiness (whether your people have AI experience and openness to new tools).

Do I need a consultant to assess AI readiness?

No. A VP of Operations or Director of IT can run a self-directed assessment using a structured framework. Outside help becomes valuable when data is fragmented across many systems, when the team has no AI experience, or when regulatory requirements add governance complexity.

What score means we are ready to deploy AI?

If you score three or higher on all three dimensions (data readiness, workflow complexity, and team readiness) on a one-to-five scale, you are ready to identify specific use cases and build a deployment roadmap. Any dimension below three becomes your starting point before AI work begins.

Adam Nameh

Co-Founder & Data Platform Architect. Adam Nameh is the Co-Founder of Alphabyte Solutions Inc., a Toronto-based data and AI consulting firm that has helped over 100 clients across North America transform complex data environments into actionable business intelligence. With a decade of hands-on experience in data architecture and platform design, Adam works directly with leadership teams to deliver practical AI and data solutions that drive real business outcomes.

View full profile →More from the blog

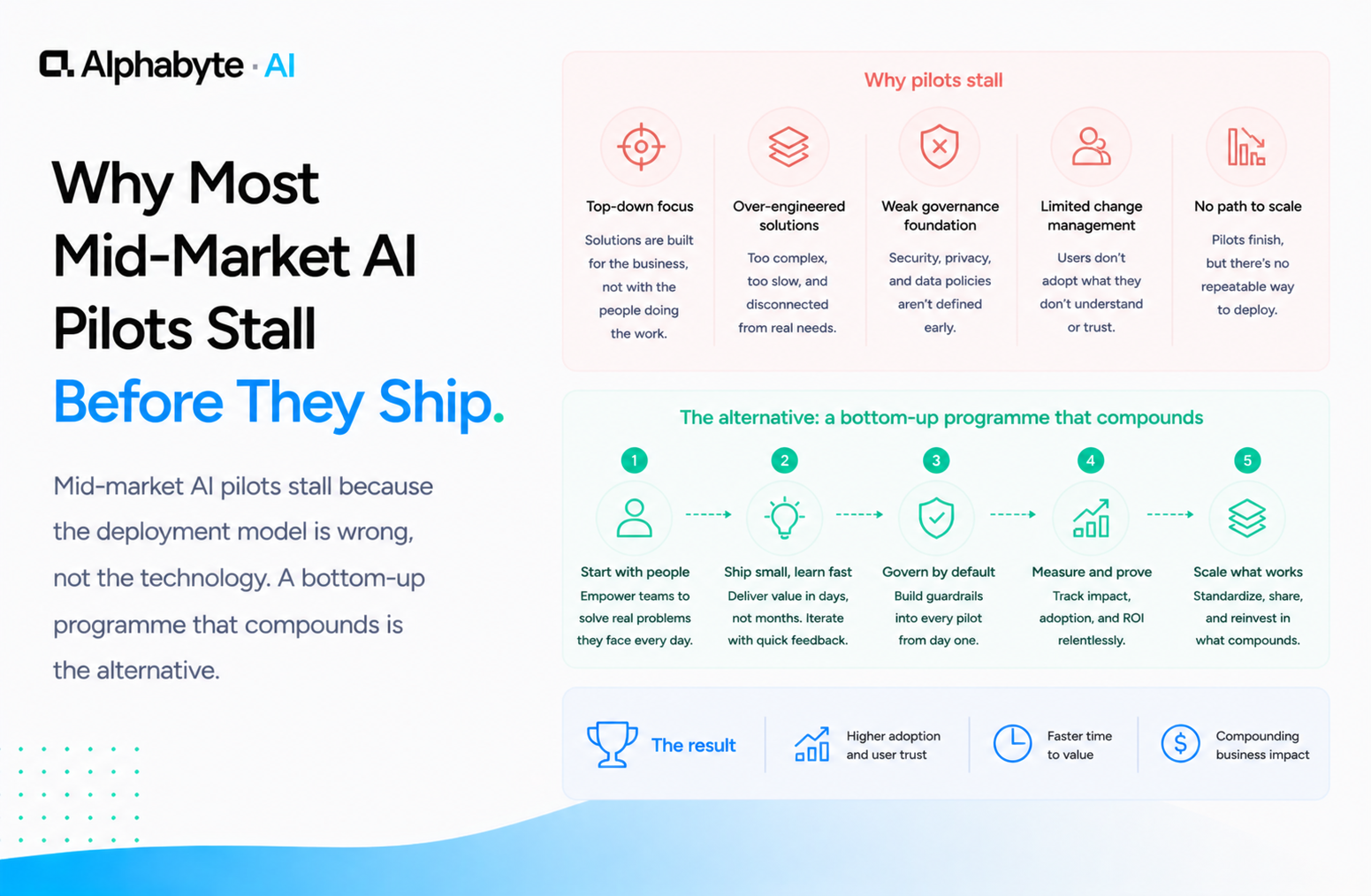

Why Most Mid-Market AI Pilots Stall Before They Ship

Mid-market AI pilots stall because the deployment model is wrong, not the technology. A bottom-up programme that compounds is the alternative.

Read more →

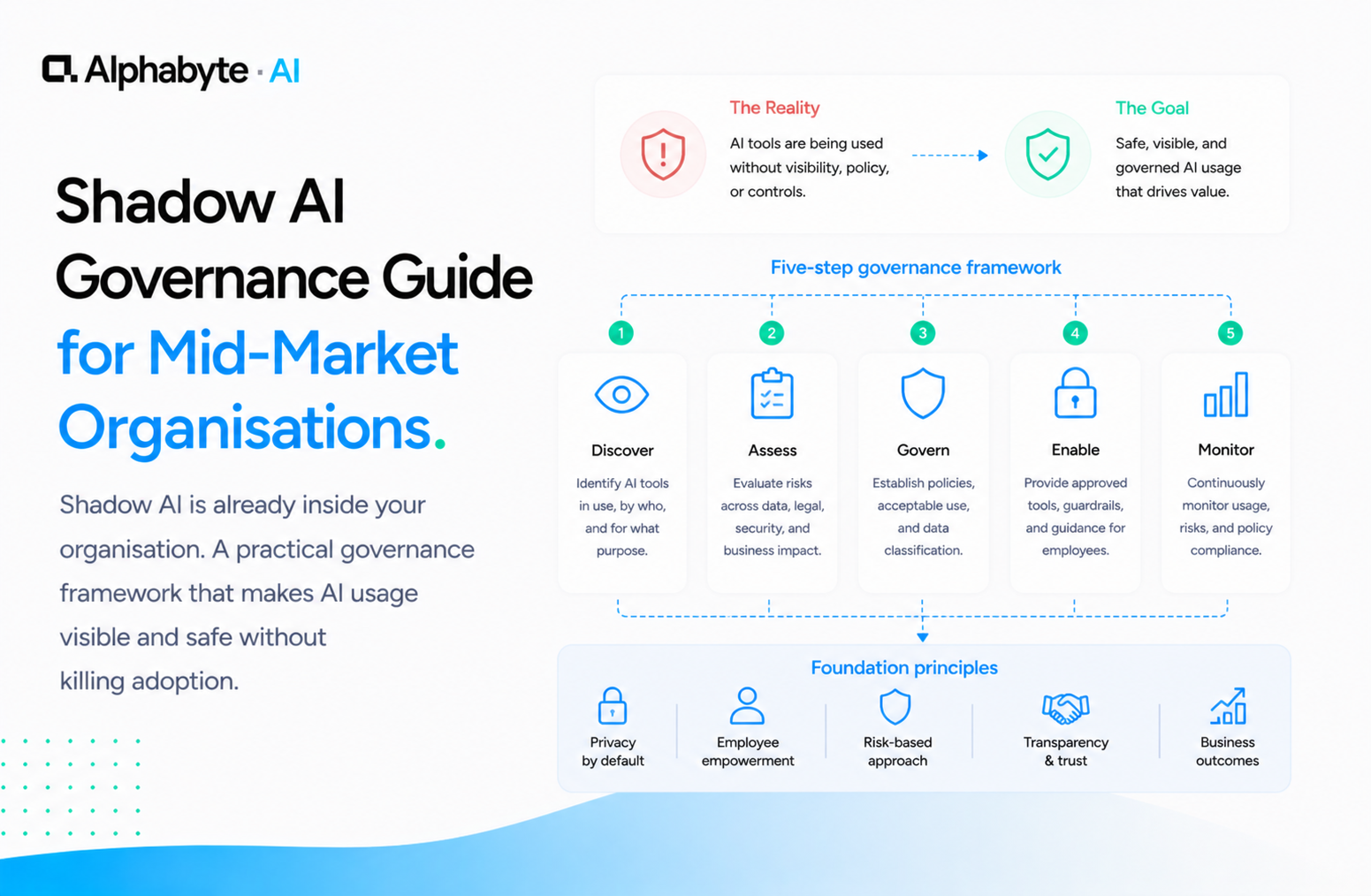

Shadow AI Governance Guide for Mid-Market Organisations

Shadow AI is already inside your organisation. A practical governance framework that makes AI usage visible and safe without killing adoption.

Read more →

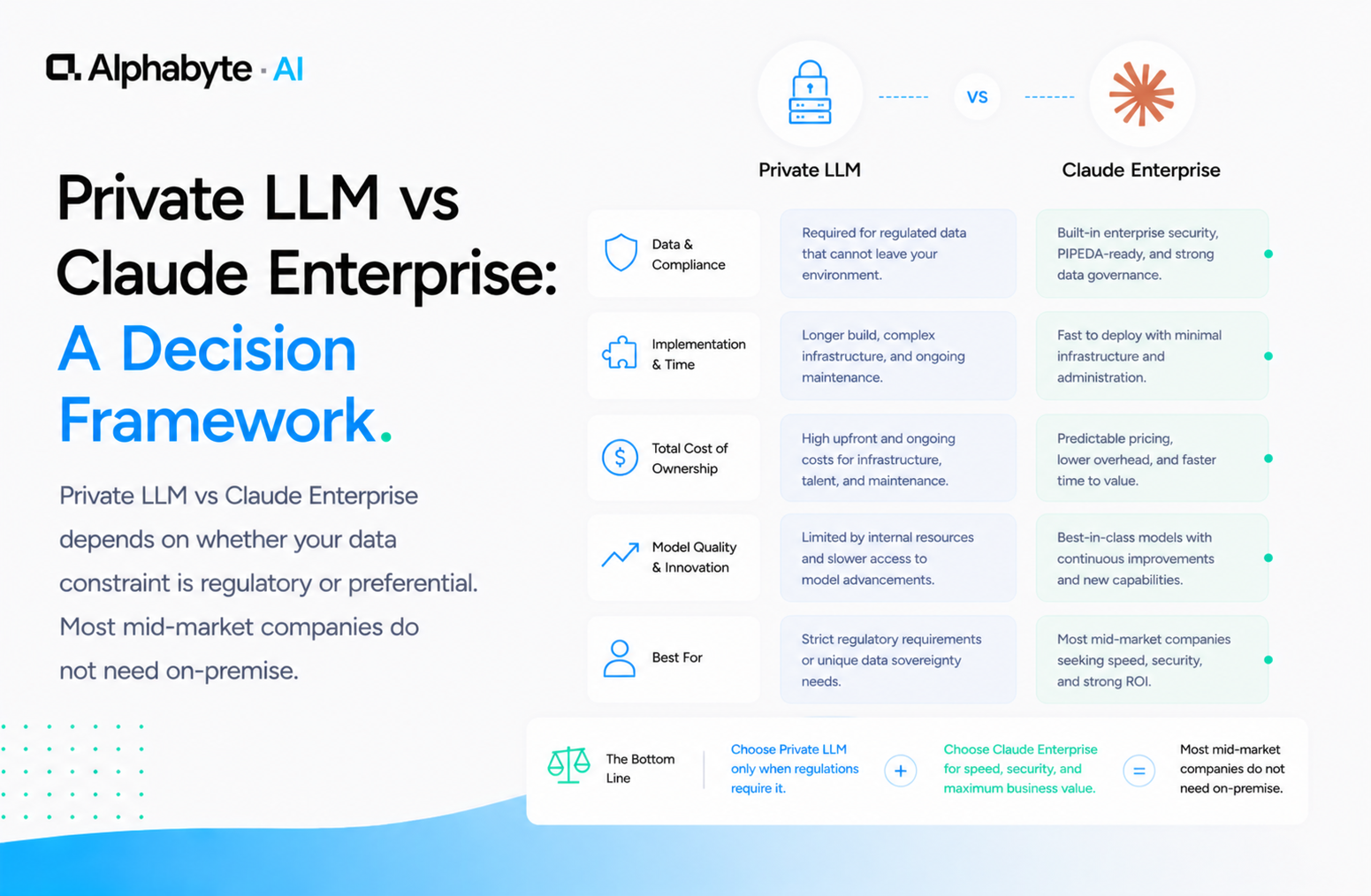

Private LLM vs Claude Enterprise: A Decision Framework

Private LLM vs Claude Enterprise depends on whether your data constraint is regulatory or preferential. Most mid-market companies do not need on-premise.

Read more →